Data Alignment

When we talk about "data alignment" in data engineering, we might be referring to one of three things.

- Arranging data elements in memory for more efficient execution

- Aligning or realigning datasets to maintain a coherent data structure

- Transforming datasets to 'align' with specific logical or business rules

Data alignment definition 1: arranging data elements in memory

The first use of the term "data alignment" refers to the arrangement of data elements in memory to optimize performance and memory usage. It ensures that data is stored in a way that suits efficient access and processing. That makes it a valuable practice in data engineering and in optimizing data pipelines, especially at scale.

In Python, data alignment mainly applies to low-level data structures such as arrays, C-style structs, or objects backed by native memory. The alignment rules specify how individual data elements within these structures are positioned in memory. Alignment can affect the size and layout of the data, as well as the speed of accessing and manipulating it.

Alignment is often dictated by the hardware architecture and the specific data types being used. For example, some processors require data to be aligned to specific byte boundaries, such as 4- or 8-byte boundaries. Others tolerate misaligned data but access aligned data faster.

Python provides certain tools and libraries, such as the struct module, to control the alignment of data structures. These tools allow developers to define and manipulate data structures with specific alignment requirements, ensuring compatibility with the underlying hardware and optimizing performance as needed.
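To make this concrete, here is a small sketch using the standard-library struct and ctypes modules. It compares a struct format that uses native alignment ('@') with one that uses standard sizes and no padding ('='), and then defines the same layout as a ctypes structure. The Record class is a hypothetical example. Exact sizes depend on the platform, so the values in the comments are only what you would typically see on common 64-bit systems.

```python
import ctypes
import struct

# struct module: '@' = native sizes *and* native alignment; '=' = standard sizes, no padding
native_size = struct.calcsize("@ci")  # char, padding bytes, then an int on its natural boundary
packed_size = struct.calcsize("=ci")  # char immediately followed by a 4-byte int
trailing = struct.calcsize("@ic")     # struct never adds padding after the last field
print(native_size, packed_size, trailing)  # typically: 8 5 5

# ctypes: the same layout as a C struct, laid out with the platform's alignment rules
class Record(ctypes.Structure):  # hypothetical record type for illustration
    _fields_ = [("flag", ctypes.c_char), ("value", ctypes.c_int)]

print(ctypes.alignment(ctypes.c_int))              # typically 4
print(ctypes.sizeof(Record), Record.value.offset)  # typically 8 4
```

Field order matters: putting the wider field first (as in "@ic") avoids the padding between fields.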

In most high-level Python code, data alignment isn't addressed directly. It is a principle that the language implementation, underlying libraries, and hardware use to optimize performance and memory usage. Nevertheless, we can illustrate the concept with a contrived example using Python's NumPy library, which is frequently employed in data engineering tasks.

NumPy organizes arrays in a way that is highly efficient for vectorized operations. This is largely because a NumPy array does not hold separate Python objects. Instead, it stores its elements in a single contiguous block of memory with one fixed data type, aligned for that type, and processes them with compiled C code. Therefore, understanding and leveraging NumPy's data arrangement can lead to more efficient memory usage and performance in Python.
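You can inspect this layout directly. The sketch below (the array and field names are illustrative) does two things:

- It checks an array's contiguity and alignment flags and its strides.
- It builds a structured dtype to show how `align=True` inserts C-style padding between fields.

The commented values assume a typical 64-bit platform.

```python
import numpy as np

arr = np.arange(10**6, dtype=np.int64)

# One contiguous, fixed-type buffer rather than a list of separate Python objects
print(arr.flags["C_CONTIGUOUS"], arr.flags["ALIGNED"])  # True True
print(arr.itemsize, arr.strides)                        # 8 (8,): each step moves 8 bytes

# Structured dtypes make alignment visible: align=True pads fields like a C struct
packed = np.dtype([("flag", np.uint8), ("value", np.int32)], align=False)
padded = np.dtype([("flag", np.uint8), ("value", np.int32)], align=True)
print(packed.itemsize, packed.fields["value"][1])  # 5 1 -> 'value' starts right after 'flag'
print(padded.itemsize, padded.fields["value"][1])  # 8 4 -> 3 padding bytes before 'value'
```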

Here's a simple example of how you might create and manipulate data in a NumPy array, illustrating a performance enhancement due to efficient data alignment:

A (somewhat contrived) example of the benefits of data alignment using NumPy:

Note that you need NumPy installed in your Python environment to run this code.

```python
import time

import numpy as np

# Create a list with a million integers
list_data = list(range(10**6))

# Create a NumPy array from the list; int64 avoids overflow when squaring
# (on some platforms NumPy's default integer type is 32-bit)
np_data = np.array(list_data, dtype=np.int64)

# Measure the time to square all elements in the list
# (perf_counter is a high-resolution clock intended for timing)
start_time_list = time.perf_counter()
list_data_squared = [i**2 for i in list_data]
end_time_list = time.perf_counter()

# Measure the time to square all elements in the NumPy array (one vectorized operation)
start_time_np = time.perf_counter()
np_data_squared = np_data**2
end_time_np = time.perf_counter()

print(f"Time taken to square a list: {end_time_list - start_time_list} seconds")
print(f"Time taken to square a numpy array: {end_time_np - start_time_np} seconds")
```

When you run this code, you'll find that squaring each element of the NumPy array is considerably faster than squaring each element of the list. Part of the difference comes from how the NumPy array's data is laid out in memory, as a contiguous, aligned buffer of fixed-size integers. The other part comes from the squaring loop running in compiled C code rather than in the Python interpreter.

Here is the output on my machine (an M1 Mac), a speedup of roughly 147x:

```
Time taken to square a list: 0.20438814163208008 seconds
Time taken to square a numpy array: 0.0013937950134277344 seconds
```

Again, in everyday Python code you rarely control data alignment directly. Still, it's important to understand how the language and the libraries you use handle data alignment so you can design your code to take advantage of it. That understanding is an integral part of designing efficient data pipelines and other data engineering tasks, and it deepens your knowledge as a data engineer.

Data alignment definition 2: ensuring merged datasets have consistent dimensions

When working with data, we are often required to merge two datasets, but these may have different dimensions.

The term "dataset dimensions" typically refers to the structure or shape of the dataset. In most contexts, it refers to the number of rows and columns that the dataset has. In multi-dimensional datasets, however, such as those used in machine learning or complex statistical analysis, "dimensions" can also refer to the number of features or variables that each observation has, even if these don't correspond to literal columns in a two-dimensional table.

So in this specific context, the act of aligning the data might involve one of several things.

It might mean ensuring that each data frame has the same columns. In this case, you would add missing columns to the data frames that don't have them, likely filling in null or default values. Alternatively, you could remove extra columns from data frames that have more than the ones you're aligning to.
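A minimal sketch of both column strategies in Pandas is shown below. The frames and column names are hypothetical.

- Adding the missing columns uses `reindex(columns=...)` with the union of the column labels. New columns are filled with NaN.
- Removing the extra columns keeps only the intersection of the column labels.

```python
import pandas as pd

# Two hypothetical extracts of the same entity with partly different columns
df_a = pd.DataFrame({"id": [1, 2], "name": ["Ana", "Ben"]})
df_b = pd.DataFrame({"id": [3], "email": ["cy@example.com"]})

# Option 1: add missing columns (filled with NaN) so both frames share every column
all_columns = df_a.columns.union(df_b.columns)
df_a_full = df_a.reindex(columns=all_columns)
df_b_full = df_b.reindex(columns=all_columns)

# Option 2: drop extra columns and keep only the columns both frames have
shared_columns = df_a.columns.intersection(df_b.columns)
df_a_shared = df_a[shared_columns]
df_b_shared = df_b[shared_columns]

print(df_a_full)
print(df_b_full)
```

If you prefer a default value over NaN, `reindex` also accepts a `fill_value` argument.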

Or it might require ensuring that each data frame has the same number of rows. This might involve appending rows with null or default values, or it might mean sampling a subset of rows from a larger data frame. If the rows represent different observations, you'd need to ensure that the same observations are present in each data frame, which could involve a complex process of data integration.
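When rows represent observations with a shared key, one way to line them up is to use that key as the index. The sketch below assumes hypothetical `sales` and `visits` tables keyed by a `customer_id` column. It either pads both frames to the union of keys, which appends NaN rows, or keeps only the keys present in both frames.

```python
import pandas as pd

# Hypothetical observations keyed by customer_id
sales = pd.DataFrame({"customer_id": [1, 2, 3], "revenue": [100, 250, 80]}).set_index("customer_id")
visits = pd.DataFrame({"customer_id": [2, 3, 4], "visits": [5, 1, 7]}).set_index("customer_id")

# Option 1: pad both frames to the union of observations (new rows are NaN)
all_ids = sales.index.union(visits.index)
sales_padded = sales.reindex(all_ids)
visits_padded = visits.reindex(all_ids)

# Option 2: keep only the observations present in both frames
common_ids = sales.index.intersection(visits.index)
sales_common = sales.loc[common_ids]
visits_common = visits.loc[common_ids]

print(sales_padded.join(visits_padded))
print(sales_common.join(visits_common))
```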

The benefits of aligning your data:

Investing time in data alignment ensures that the data is high quality, fit for purpose, and trusted. At a more basic level, you will often run into blockers if you don't align your data before analysis (as in, your analysis won't work). The following practices help:

- Establishing clear data lineage: Use Python's logging module to log the source and destination of data at each stage of the pipeline. This helps to establish clear data lineage and provides traceability in case of errors or inconsistencies. A tool like Dagster provides rich metadata and data lineage information.

- Ensuring data consistency: You will run into lots of frustrations working with inconsistent data. Use Python's Pandas library to ensure that the data is consistent in terms of data types, formats, and naming conventions. For example, you can use the `pandas.DataFrame.astype` method to convert columns to the appropriate data type, and the `rename` method to standardize column names.

- Normalizing data: Use Python's Pandas library to normalize data into a consistent and standardized format. For example, you can use the `groupby` function to group data by a specific column and the `agg` function to perform aggregation functions on the grouped data.

- Cleaning and preprocessing data: Use Python's Pandas library to clean and preprocess data. For example, you can use the `fillna` function to fill missing values, and the `apply` function to perform custom transformations on the data.

- Validating data: Use Python's assert statement to validate data. For example, you can use the assert statement to ensure that certain columns have specific values or that certain conditions are met.

- Using appropriate alignment techniques: Use Python's Pandas library to align data using appropriate techniques such as merging, joining, and concatenating. For example, you can use the `merge` function to merge two dataframes based on a specific column and the `concat` function to concatenate two dataframes.

- Automating the alignment process: Use Python's scripting capabilities to automate the alignment process. For example, you can create a Python script that reads in data from a source, performs the necessary transformations and alignments, and writes the results to a destination.
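Several of these practices can be combined in one small script. The sketch below covers logging, `rename`/`astype`, `fillna`/`apply`, `groupby`/`agg`, `merge`, and `assert`. The two source tables (`crm`, `orders`), their columns, and the `run_pipeline` function are all hypothetical. In a real pipeline the data would be read from and written to actual sources and destinations.

```python
import logging

import pandas as pd

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("alignment_pipeline")

# Hypothetical inputs: two sources describing the same customers
crm = pd.DataFrame({"Customer ID": ["1", "2", "3"], "Region": ["north", "south", None]})
orders = pd.DataFrame({"customer_id": [1, 1, 2, 3], "amount": [10.0, 15.0, None, 7.5]})


def run_pipeline(crm: pd.DataFrame, orders: pd.DataFrame) -> pd.DataFrame:
    # Lineage: record what was read from which source
    logger.info("Read %d rows from source 'crm' and %d rows from 'orders'", len(crm), len(orders))

    # Consistency: standardize column names and types so the join keys match
    crm = crm.rename(columns={"Customer ID": "customer_id", "Region": "region"})
    crm = crm.astype({"customer_id": "int64"})

    # Cleaning: fill missing values, then apply a custom transformation
    crm["region"] = crm["region"].fillna("unknown").apply(str.title)
    orders = orders.fillna({"amount": 0.0})

    # Normalizing: one row per customer via groupby + agg
    totals = orders.groupby("customer_id", as_index=False).agg(total_amount=("amount", "sum"))

    # Alignment: merge the two sources on the shared key
    result = crm.merge(totals, on="customer_id", how="left")

    # Validation: note that assert is skipped under `python -O`,
    # so production pipelines may prefer explicit checks that raise errors
    assert result["customer_id"].is_unique, "duplicate customers after merge"
    assert (result["total_amount"] >= 0).all(), "negative totals"

    logger.info("Wrote %d aligned rows to destination", len(result))
    return result


aligned = run_pipeline(crm, orders)
print(aligned)
```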

Example of data alignment (definition 2) in Python using Pandas

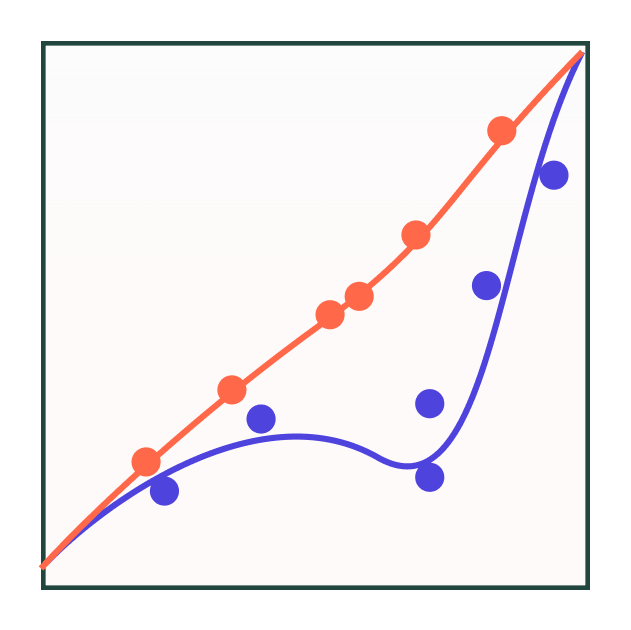

In Pandas, data alignment is intrinsic, meaning it's built into the operations you perform. When you perform an operation between two DataFrame or Series objects, Pandas aligns their data by label, not by position. For Series and DataFrame the labels are the index, and for DataFrame they also include the columns.

For example, consider the case where we have two dataframes df1 and df2, and we want to add them together. Even if the rows in df1 and df2 are in different order, Pandas will take care of aligning them according to their labels (i.e., index and column names) when performing the addition operation. If there are labels present in one dataframe but not in the other, the resulting dataframe will contain those labels with a value of NaN ("Not a Number").

Here's an example of such data alignment in Python using the Pandas library.
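A minimal sketch of this automatic alignment follows, using small hypothetical frames whose rows are in different orders. The `+` operator matches rows and columns by label. The `add` method additionally accepts a `fill_value`, which is used when a label is missing on only one side. Where a value is missing on both sides, the result is still NaN.

```python
import pandas as pd

# Same row labels in a different order; df2 also has an extra column "y"
df1 = pd.DataFrame({"x": [1, 2, 3]}, index=["a", "b", "c"])
df2 = pd.DataFrame({"x": [30, 10], "y": [300, 100]}, index=["c", "a"])

# "+" aligns on labels: x gives a=11.0, b=NaN, c=33.0; y is NaN everywhere
result = df1 + df2
print(result)

# fill_value=0 stands in for a value missing on one side only:
# x gives a=11.0, b=2.0, c=33.0; y gives a=100.0, b=NaN (missing on both sides), c=300.0
filled = df1.add(df2, fill_value=0)
print(filled)
```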

About the Pandas align() function:

The align() function in Pandas is used explicitly to align two data objects with each other according to their labels. It works on both Series and DataFrame objects. It returns a tuple of two new objects, each reindexed to a common set of labels. See the Pandas documentation for DataFrame.align and Series.align for the full list of parameters.

The basic syntax is:

```python
s1, s2 = s1.align(s2, join='type_of_join')
```

The join parameter accepts one of four options: 'outer' (the default), 'inner', 'left', or 'right'. The 'outer' join type, for instance, ensures that the result includes the union of all labels from both inputs, filling with NaN for missing data on either side.
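To compare the four join types side by side, the sketch below aligns two small hypothetical Series and records which labels survive each join. Both returned objects always share the same index, so only the left one is inspected. The results are as follows:

- 'outer' keeps a through d.
- 'inner' keeps only b and c.
- 'left' keeps the labels of s1.
- 'right' keeps the labels of s2.

```python
import pandas as pd

s1 = pd.Series([1, 2, 3], index=["a", "b", "c"])
s2 = pd.Series([20, 30, 40], index=["b", "c", "d"])

# Record which labels each join type keeps
aligned_labels = {}
for how in ["outer", "inner", "left", "right"]:
    left, right = s1.align(s2, join=how)
    assert left.index.equals(right.index)  # both sides always end up with the same labels
    aligned_labels[how] = list(left.index)
    print(how, aligned_labels[how])
```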

Our align() example:

Here's an example of how the align() function can be used. Suppose we have two data frames, df1 and df2, with different shapes and some overlapping columns:

```python
import pandas as pd
import numpy as np

df1 = pd.DataFrame({
    'A': [1, 2, 3],
    'B': [4, 5, 6],
    'C': [7, 8, 9]
})

df2 = pd.DataFrame({
    'A': [10, 11],
    'B': [12, 13],
    'D': [14, 15]
})
```

We can align the data frames on both their index and their columns using the align() function as follows:

```python
df1_aligned, df2_aligned = df1.align(df2, fill_value=np.nan)
print(df1_aligned)
print(df2_aligned)
```

This will create two new data frames, df1_aligned and df2_aligned, with aligned indexes and matching columns:

df1_aligned:

```
   A  B  C   D
0  1  4  7 NaN
1  2  5  8 NaN
2  3  6  9 NaN
```

df2_aligned:

```
      A     B   C     D
0  10.0  12.0 NaN  14.0
1  11.0  13.0 NaN  15.0
2   NaN   NaN NaN   NaN
```

Now the data frames have matching shapes, with NaN values in the rows and columns that didn't exist in the original data frames. This allows us to perform operations on the aligned data frames, such as adding them together:

```python
df_sum = df1_aligned + df2_aligned
print(df_sum)
```

This will give us a new data frame, df_sum, with the sum of the aligned values:

df_sum:

```
      A     B   C   D
0  11.0  16.0 NaN NaN
1  13.0  18.0 NaN NaN
2   NaN   NaN NaN NaN
```

As you can see, the aligned data frames allow us to perform operations on data sets that might not have originally had the same shapes or column names. As always, this is a simplistic example, but hopefully illustrative of the process.
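Two variations on the same df1 and df2 are worth knowing.

- Because Pandas arithmetic already aligns on labels, `df1 + df2` gives the same result as adding the explicitly aligned frames.
- Passing `fill_value=0` to `align` fills the missing cells with 0 instead of NaN, so the sum keeps the values that exist in only one frame.

The sketch below checks both. Whether 0 is an appropriate fill is a modeling decision, not a default to adopt blindly.

```python
import pandas as pd

df1 = pd.DataFrame({"A": [1, 2, 3], "B": [4, 5, 6], "C": [7, 8, 9]})
df2 = pd.DataFrame({"A": [10, 11], "B": [12, 13], "D": [14, 15]})

# Arithmetic aligns implicitly, so this matches the explicit align-then-add result
direct_sum = df1 + df2
df1_aligned, df2_aligned = df1.align(df2)
print(direct_sum.equals(df1_aligned + df2_aligned))  # True

# Filling with 0 during alignment keeps values present in only one frame
left, right = df1.align(df2, fill_value=0)
filled_sum = left + right
print(filled_sum)  # A: 11, 13, 3; B: 16, 18, 6; C: 7, 8, 9; D: 14, 15, 0
```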

Data alignment definition 3: meeting business rules

Aligning data can also be defined as adjusting data to conform to business rules, policies, and regulations. In this context, "data alignment" refers to the process of ensuring that the data collected, stored, and processed within an organization adheres to the specific requirements and guidelines set by the business or governing bodies.

This type of data alignment involves validating, cleansing, and transforming data to meet the desired standards and comply with relevant regulations. It may include tasks such as data normalization, data cleansing to remove inconsistencies or errors, and applying data transformation rules to ensure consistency and compatibility across different systems or processes.
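As an illustration, the sketch below applies a hypothetical rule set to an orders table. It cleanses currency codes, applies a simple transformation, and validates each row against the rules. Conforming rows and rejected rows are returned separately so that rejected rows can be reported rather than silently dropped. The allowed currencies, the non-negative-amount rule, and the `apply_business_rules` function are assumptions made for the example, not rules from any particular organization.

```python
import pandas as pd

# Hypothetical rule set: accepted currency codes and a non-negative amount
ALLOWED_CURRENCIES = {"USD", "EUR", "GBP"}

orders = pd.DataFrame({
    "order_id": [1, 2, 3, 4],
    "currency": ["usd", "EUR", " gbp", "JPY"],
    "amount": [19.99, -5.00, 42.50, 10.00],
})


def apply_business_rules(df: pd.DataFrame) -> tuple[pd.DataFrame, pd.DataFrame]:
    """Cleanse, transform, and validate rows; return (conforming, rejected)."""
    cleaned = df.copy()
    # Cleansing: trim whitespace and normalize currency codes to upper case
    cleaned["currency"] = cleaned["currency"].str.strip().str.upper()
    # Transformation: store amounts rounded to two decimal places
    cleaned["amount"] = cleaned["amount"].round(2)
    # Validation: rows must use an allowed currency and a non-negative amount
    is_valid = cleaned["currency"].isin(ALLOWED_CURRENCIES) & (cleaned["amount"] >= 0)
    return cleaned[is_valid], cleaned[~is_valid]


conforming, rejected = apply_business_rules(orders)
print(conforming)  # orders 1 and 3, with currencies USD and GBP
print(rejected)    # orders 2 (negative amount) and 4 (currency not allowed)
```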

Data alignment in the context of business rules, policies, and regulations is crucial for maintaining data integrity, accuracy, and compliance. It ensures that the data used for decision-making, analysis, reporting, and other business operations is reliable, consistent, and meets the necessary requirements defined by the organization or external entities.

Clean or Cleanse

Cluster

Curate

Denoise

Denormalize

Derive

Discretize

ETL

Encode

Filter

Fragment

Homogenize

Impute

Linearize

Munge

Normalize

Reduce

Reshape

Serialize

Shred

Skew

Split

Standardize

Tokenize

Transform

Wrangle