Break down the silos between data engineering and BI tools

Traditionally, there's been a wall between data engineering and BI tools, leaving teams with little visibility into data dependencies and lineage from upstream data sources to downstream BI dashboards and views. The consequences are stale insights, inaccurate reports, and redundant work, all of which lower the quality of decision-making and slow down business productivity.

What's causing it?

The traditional silos between data engineering and BI tools make it difficult to trace data from raw sources to the BI dashboards that drive business decisions.

Announcing the New Dagster + Sigma Integration

To break down the traditional barriers between data engineering and BI tools, Dagster Labs and Sigma Computing have launched a powerful new integration that enables data teams to easily track, orchestrate, and analyze their data flows end-to-end.

Now generally available (GA), this integration creates a unified lineage from upstream data managed in Dagster to BI insights generated in Sigma, ensuring data flows smoothly across all stages of the pipeline.

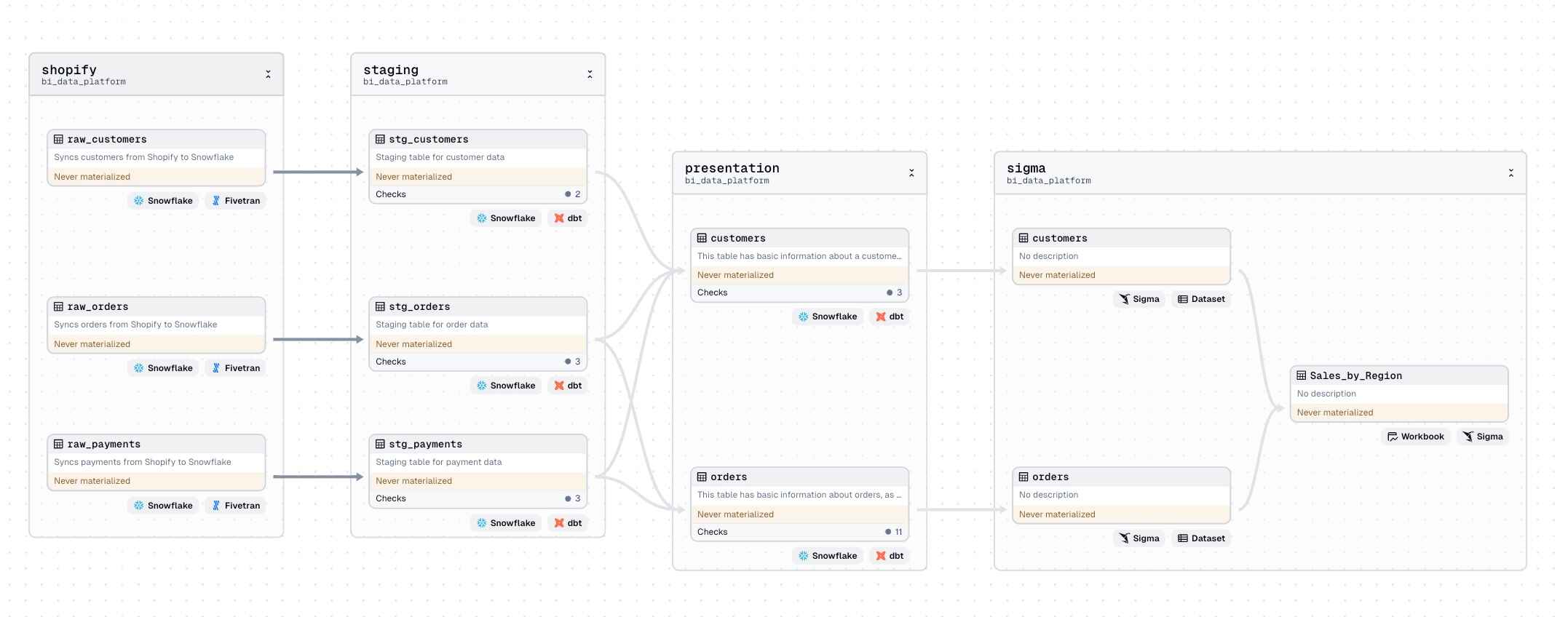

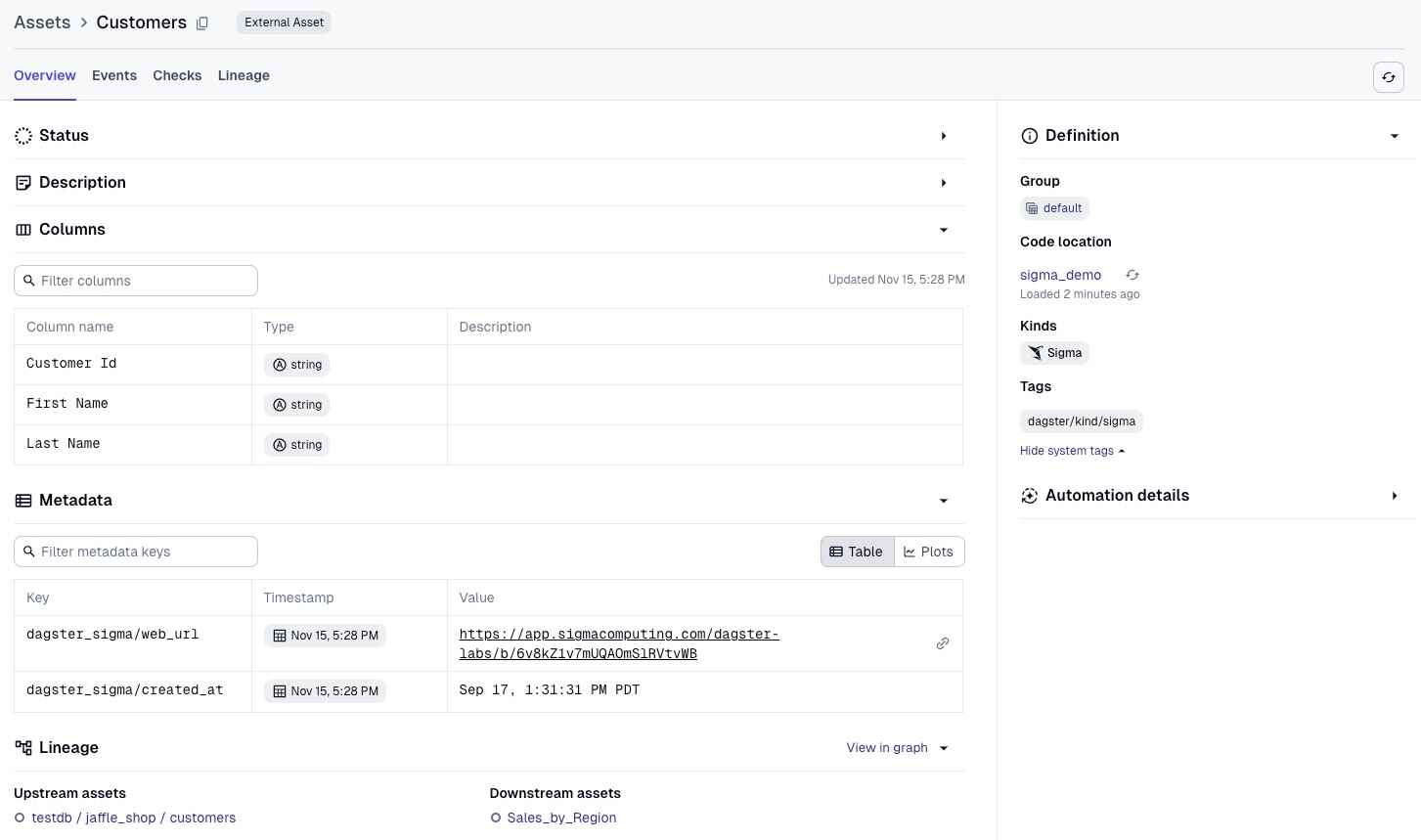

With this integration, your Sigma datasets and workbooks are represented as assets in the Dagster asset graph. Data teams can track dependencies and lineage between Sigma and upstream Dagster-managed data, which gives them a comprehensive view of data pipelines that now include BI assets. Data platform teams can then understand, monitor, and manage their data flows with far greater visibility and control.

Here's what you can expect:

- Improved Data Lineage and Visibility: By representing Sigma objects in Dagster's asset graph, data teams gain a comprehensive view of their data flows. This makes it easy for data engineers and business analysts to track how data moves from its source through transformation stages to the dashboards and reports that drive business decisions.

- End-to-End Data Pipeline Orchestration: With Sigma integrated into the Dagster environment, users can build complete end-to-end pipelines encompassing data processing and analytics. This means upstream data changes can automatically trigger updates in Sigma, ensuring that BI insights remain accurate and timely without manual intervention.

- Enhanced Collaboration Across Teams: The integration fosters collaboration between data analysts and upstream practitioners by providing a shared view of data lineage and dependencies. When changes occur upstream, analysts can quickly assess potential impacts on Sigma reports and dashboards.
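To make the impact-assessment idea concrete, here is a minimal sketch in plain Python. It is a toy model of a lineage graph, not the Dagster or Sigma API: the asset names, the `DOWNSTREAM` mapping, and the `impacted_assets` helper are all hypothetical and exist only for illustration.

```python
from collections import deque

# Hypothetical lineage: each key maps to the assets that read from it directly.
# None of these names come from Dagster or Sigma; they are placeholders.
DOWNSTREAM = {
    "raw_orders": ["orders_cleaned"],
    "orders_cleaned": ["sigma_orders_dataset"],
    "sigma_orders_dataset": ["sigma_sales_workbook", "sigma_ops_workbook"],
    "sigma_sales_workbook": [],
    "sigma_ops_workbook": [],
}


def impacted_assets(changed, downstream=DOWNSTREAM):
    """Return every asset downstream of `changed`, in breadth-first order."""
    seen = []
    queue = deque(downstream.get(changed, []))
    while queue:
        asset = queue.popleft()
        if asset in seen:
            continue  # already recorded via another path
        seen.append(asset)
        queue.extend(downstream.get(asset, []))
    return seen


if __name__ == "__main__":
    # Which Sigma objects might be affected if the cleaned table changes?
    print(impacted_assets("orders_cleaned"))
```

With a shared graph like this, an analyst can answer "what does this upstream change touch?" by walking the lineage instead of asking around.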

Key Benefits

By connecting Dagster's orchestration power with Sigma's user-friendly analytics and BI platform, data teams can unlock a range of benefits:

- Operational Efficiency: Automating the refresh and orchestration of Sigma BI assets reduces manual processes, leading to more efficient workflows and up-to-date analytics.

- Greater Scalability: The integration's end-to-end pipeline visibility and automated updates support data platform scalability, allowing teams to build a unified data ecosystem that grows with their needs.

- Centralized Data Management: For data platform teams, Dagster becomes a one-stop shop for managing, monitoring, and orchestrating data assets, including those used in Sigma's analytics and BI platform.

Practical Use Cases

- For Data Engineers: Data engineers can use this integration to create pipelines that cover the entire data journey, from ingestion to transformation to Sigma dashboards. This setup allows them to trigger automatic Sigma dashboard refreshes in response to upstream changes.

- For Analysts and Business Users: With data flowing smoothly from Dagster's pipelines into Sigma's workbooks and dashboards, analysts can be confident that their reports are always based on the latest data. This minimizes the risk of stale insights and ensures business users make decisions based on accurate, up-to-date information.

How It Works

The Dagster + Sigma integration is built to fit easily into existing workflows by representing Sigma data models and workbooks as assets in the Dagster asset graph. This setup provides a live view of data dependencies and relationships across the entire data lifecycle, allowing easier tracking and management.

Dagster uses assets to represent Sigma data models and workbooks, linking them to upstream data pipelines managed in Dagster. As a result, changes in data sources automatically flow through to Sigma, allowing BI dashboards to stay in sync without manual updates or redundant processes. The only prerequisite for this automated end-to-end experience is that materializations are first created in the Sigma workbook.
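As a rough illustration of why a single asset graph keeps everything in sync, the sketch below orders a set of assets so that each one is refreshed only after its upstream dependencies. It uses Python's standard-library `graphlib` rather than Dagster itself, and the asset names and the `refresh_order` helper are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical assets: each key lists the upstream assets it depends on.
# The last two stand in for a Sigma data model and a Sigma workbook.
UPSTREAM = {
    "raw_orders": set(),
    "orders_cleaned": {"raw_orders"},
    "sigma_orders_data_model": {"orders_cleaned"},
    "sigma_sales_workbook": {"sigma_orders_data_model"},
}


def refresh_order(upstream=UPSTREAM):
    """Return the assets in an order where dependencies always come first."""
    return list(TopologicalSorter(upstream).static_order())


if __name__ == "__main__":
    for step, asset in enumerate(refresh_order(), start=1):
        print(step, asset)
```

An orchestrator performs a far richer version of this ordering, but the principle is the same: once BI objects sit in the same graph as the tables that feed them, they can be refreshed after those tables rather than on an unrelated schedule.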

How to Get Started

Getting started with the Dagster + Sigma integration is straightforward.

Simply install the dagster-sigma package, configure it to connect with your Sigma organization, and you're ready to start orchestrating Sigma assets alongside the rest of your data platform.

For more details, please refer to the setup documentation or the Dagster Sigma API documentation.

Try it out today, experience the power of orchestrated business intelligence, and tell us what you think!
