Powering data platforms that drive results

Dagster+ Pro equips your data team to deliver real business results: faster time-to-insight, reduced compute costs, and a modern platform that grows with your AI and analytics strategy.

Build the foundation for AI, innovation, and growth

Don’t settle for half-baked solutions: a legacy orchestrator that drags velocity down, a home-baked tool that needs constant maintenance, or a vertical solution that creates more silos than it removes. Dagster+ Pro is a unified, future-proof platform built to support real collaboration, reliability at scale, and rapid delivery across every team and use case.

Endless maintenance on brittle, hand-rolled orchestration

No visibility into what ran, what failed, or why

Pipelines that don’t play well with your growing stack

Too many tools duct-taped together with too little trust

Security and compliance requirements slowing everything down

Developers stuck debugging when they should be building

Enterprise-grade orchestration without the overhead

Dagster+ Pro was built for organizations like yours: Fortune 500 enterprises running mission-critical workloads, high-growth teams modernizing their stack without re-platforming, and companies that prioritize developer productivity, data governance, and cost control.

Accelerate strategic initiatives

Time is money, and Dagster+ Pro helps you win back both. Ship faster, improve stability, and move work from backlog to production. A Forrester Total Economic Impact (TEI) study found that enterprises unlocked $1.7M in value from faster time-to-value over three years.

Optimize cost, improve efficiency

Legacy tools and ad-hoc orchestration strategies are quietly costing you. Dagster+ Pro replaces manual work, fragmented tooling, and runaway compute with a centralized, efficient, and testable system.

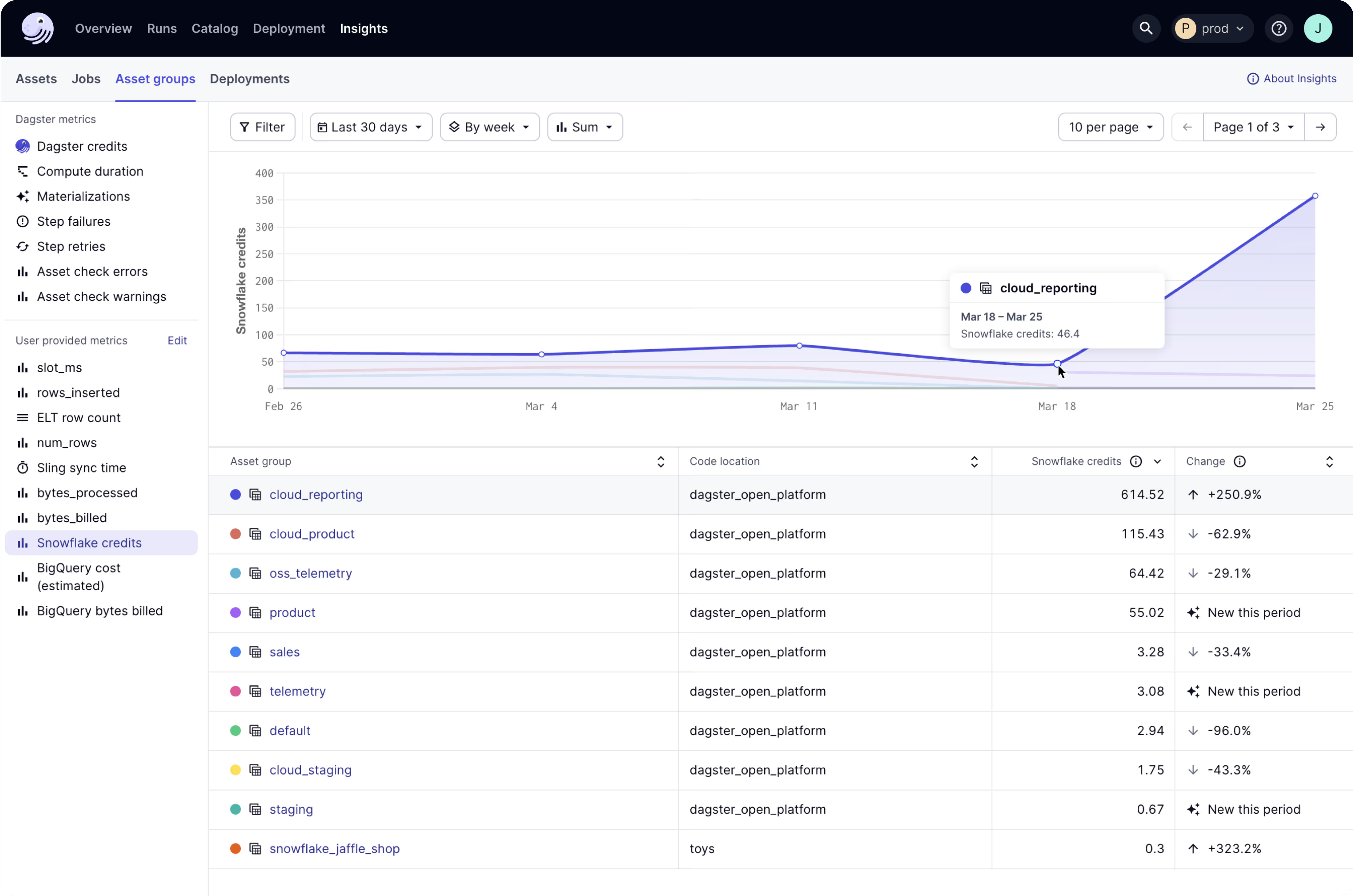

Achieve end-to-end observability

Modern pipelines demand modern visibility. With Dagster+ Pro you get unified, column-level lineage; real-time asset health and metadata; and dashboards that track run costs and performance trends. No more black boxes or data silos.

Build a scalable AI strategy

AI starts with data. Dagster+ Pro is the orchestration backbone for your AI stack. From ingestion to ML model retraining, it empowers more stakeholders and turns your data platform into an engine for innovation.

Flexible deployment that fits your architecture

Every enterprise has its own flavor of complexity, and Dagster's architecture is designed to adapt, not dictate. Whether you're fully cloud-native, hybrid, or somewhere in between, Dagster+ Pro gives you the control and flexibility to deploy in a way that fits your world, not ours.

Run Dagster on your cloud or ours

Support for hybrid and multi-tenant architectures

API-first design for easy integration into existing workflows

Scales with your team, infra, and compliance needs

Enterprise-grade security, no shortcuts

Security isn’t something we slap on at the end — it’s baked into everything we do. Dagster+ Pro gives you the confidence to scale with peace of mind, backed by the policies, certifications, and controls your team expects.

SOC 2 Type II, HIPAA, and beyond

We’re independently audited and aligned with the standards that matter most, including SOC 2 and HIPAA.

Encryption that means business

Data is protected with TLS 1.2+ in transit and AES-256+ at rest, because shortcuts don’t belong in security.

Access controls that stay out of your way

SSO, RBAC, and SCIM provisioning make it easy to manage users and permissions without breaking your flow, with support for Google, GitHub, and SAML IdPs.

Audit logs and retention policies

Track activity and changes across the system with a unified view of every user action.

Real humans, real help, really fast

When something breaks at scale, the last thing you want is to fill out a form and wait. With Dagster+ Pro, you get support that feels like an extension of your own team. Personalized onboarding support, a dedicated Slack or Microsoft Teams channel, and premium support with priority response times mean you're never flying solo. Whether it's configuration help or a quick sanity check, you're talking to real engineers who actually understand your setup.

Ready when you are

If your current orchestration setup feels like a bottleneck, you’re not alone. Dagster+ Pro helps enterprise teams ship faster, recover from failures quicker, and build platforms that scale with ambition. Talk to our team and see what’s possible.

Trusted by Data Teams. Built for Scale. Ready for You.
