Your platform for AI and data pipelines.

Dagster is a unified control plane for teams to build, scale, and observe their AI & data pipelines with confidence.

Trusted by teams building modern data platforms worldwide

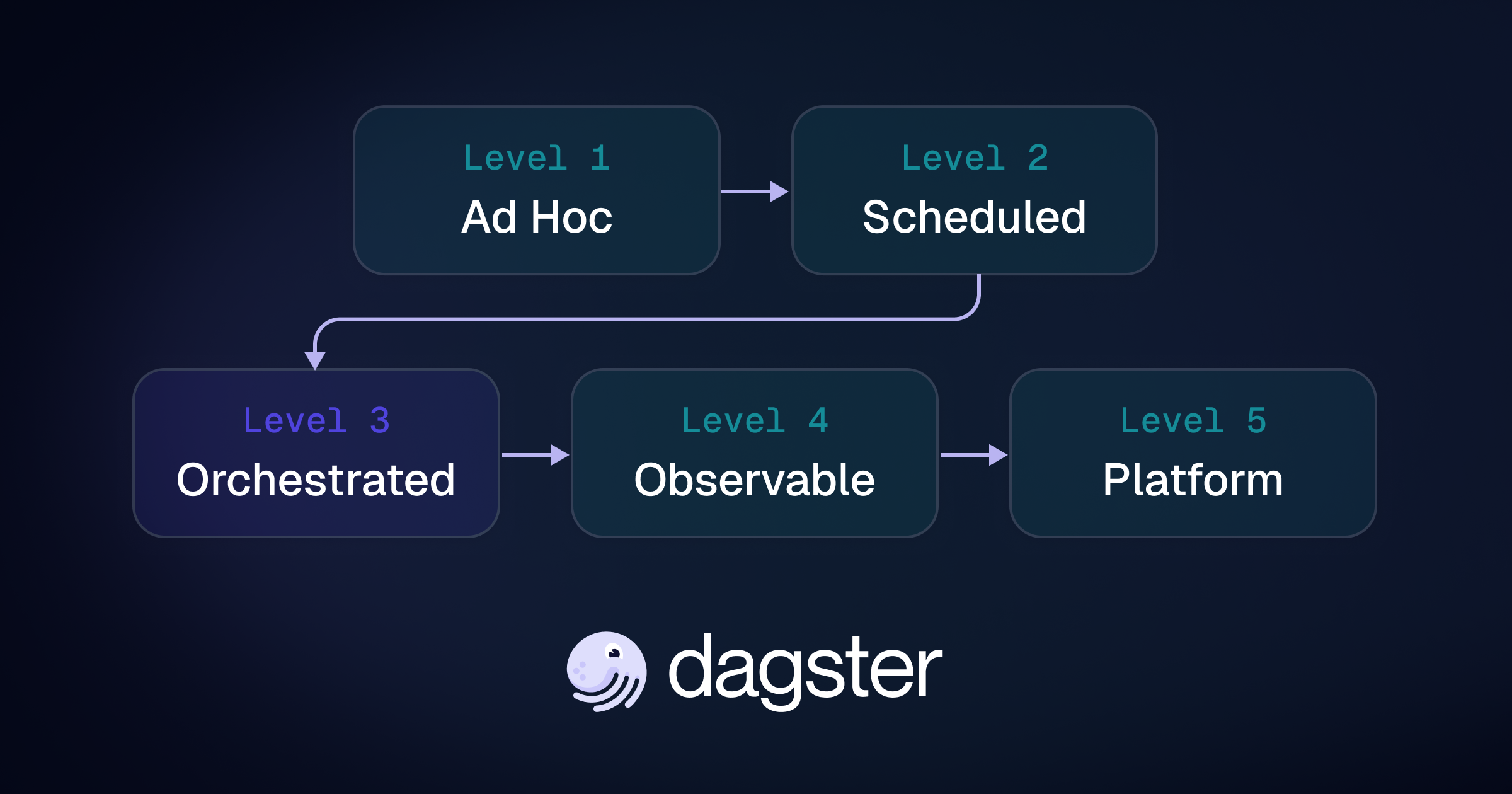

Battle-tested data orchestration

A unified platform built to run your most critical data flows, from pipelines and transformations to full-scale AI and ML operations.

ETL & ELT Pipelines

Build reliable pipelines to move data from SaaS apps and APIs to warehouses like Snowflake or BigQuery.

Data Transformation

Orchestrate dbt, Databricks, or Python transformations to produce clean, modeled data that powers analytics and BI.

AI & ML Workflows

Accelerate ML development with pipelines that streamline data prep, model training, and experiment tracking.

Integrated observability

See everything, catch issues fast, and keep your data trusted with built-in lineage, alerting, and real-time health metrics.

Data Catalog & Lineage

Empower teams to discover and understand datasets with clear ownership, lineage, and auto-generated documentation that stays current.

Monitoring & Alerting

Stay ahead of data incidents with intelligent alerts in Slack, and resolve them faster with AI-powered debugging and impact analysis.

Real-time Health Metrics

Track freshness, performance, costs, and reliability to keep pipelines healthy and stakeholders confident in their data.

Activate your data with Compass

Turn warehouse data into instant, trustworthy answers for every stakeholder, right inside the tools they already use.

Data-driven Decisions in Seconds

Give your stakeholders instant access to business insights inside the tools they already use, without waiting for reports or dashboards.

Unlock the Power of Your Warehouse

Compass understands your unique business context and answers common business questions with real data from your warehouse.

Governed by the Data Team

Your analysts and data engineers guide Compass behind the scenes with GitOps — so answers stay trustworthy.

Enterprise ready

Roles & permissions

We offer SSO, RBAC, and SCIM provisioning, with support for Google, GitHub, and SAML IdPs.

SOC 2 Type II, HIPAA and beyond

We’re independently audited and aligned with the standards that matter most.

Flexible deployment options

Run Dagster on your cloud or ours, with support for North American and European regions.

Multi-tenant instances

Keep your code and data isolated with multi-tenant code deployments.

Audit logs and retention policies

Track all activity and changes made to the system with a unified view of all user actions.

Enterprise support

Get dedicated support from our team of Dagster experts.

Ship data and AI products faster.

Automate, monitor, and optimize your data pipelines with ease. Get started today with a free trial or book a demo to see Dagster in action.

Trusted by data teams.

Built for scale.

Ready for you.

“Dagster has been instrumental in empowering our development team to deliver insights at 20x the velocity compared to the past. From Idea inception to Insight is down to 2 days vs 6+ months before.”

“Somebody magically built the thing I had been envisioning and wanted, and now it's there and I can use it.”

"We would not exist today as a company if we didn't move to a single unified codebase, with a real data platform beneath it."

"Dagster is easy to use, it's ELT friendly, can integrate with the main modern tools out of box and allows you to automate whatever you want wherever it is."
