Use Dagster’s External Assets feature for data observability, lineage, data quality, and cataloging while bringing your own orchestration and scheduling.

Today we’re announcing External Assets, available in open-source and in Cloud. External Assets enable Dagster to model data assets that are not scheduled and orchestrated by Dagster. The primary goal of External Assets is to enable the adoption of Dagster as a “single pane of glass” for a data platform without requiring a wholesale migration of all scheduling and orchestration infrastructure in that platform.

Context

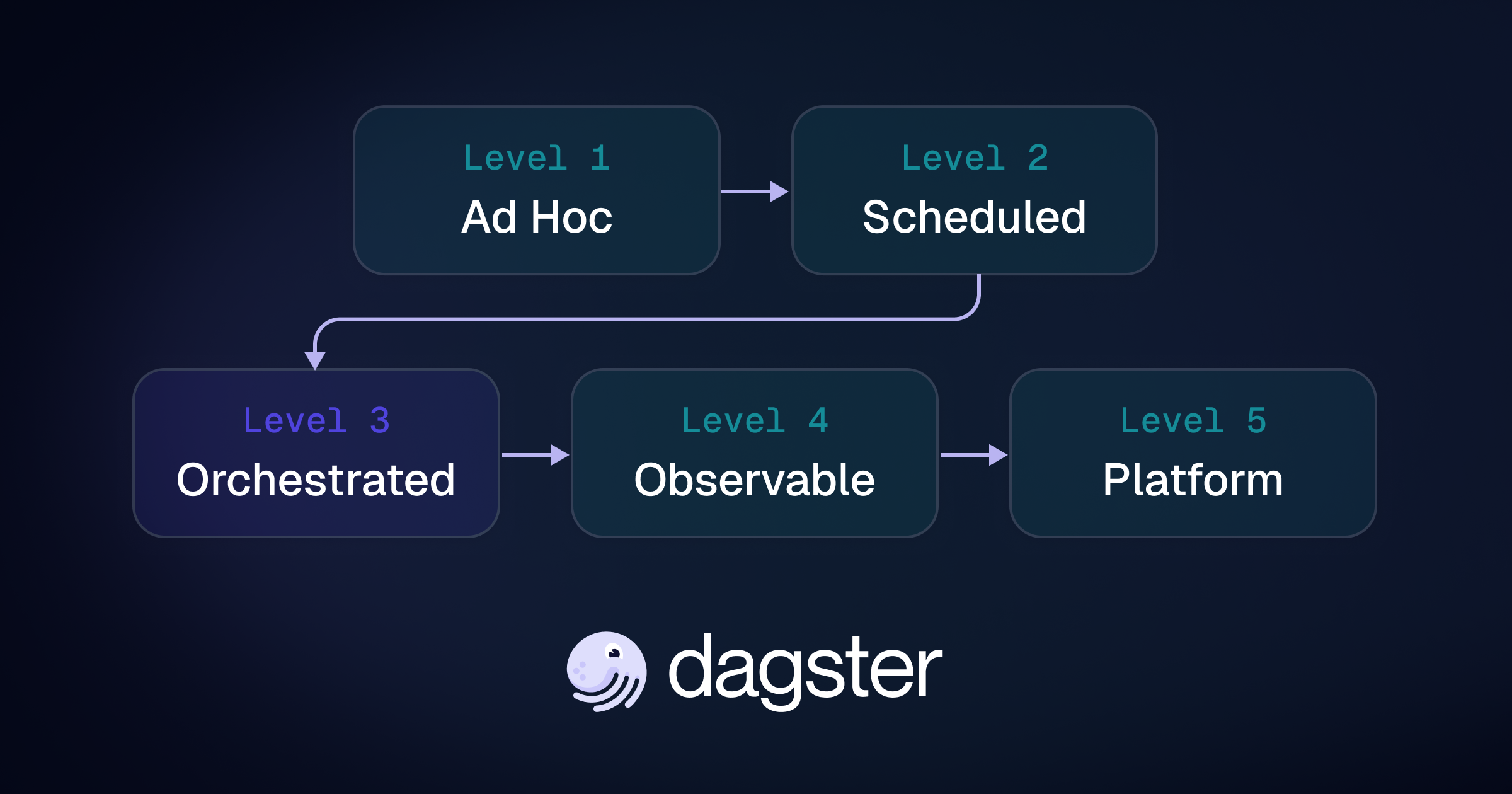

Dagster is traditionally categorized as an orchestrator. However, in our previous post announcing Pipes, we described how Dagster is actually three layered systems:

- A metadata platform for lineage, observability, and data quality;

- An orchestrator; and

- A Python framework for business logic and data transformations.

Pipes integrations provide a clean separation between the orchestration and data transform layers. External assets, in a similar fashion, provide a clean separation between orchestration and the metadata layer.

Taken together, these two features make Dagster the most flexible, composable, and powerful solution for building an organization’s data platform control plane.

Metadata

The data structure that drives Dagster’s metadata layer is the event log: an immutable log of events that serves as a ledger of all activity on the data platform. When Dagster orchestrates a computation, it automatically adds structured events to this log out of the box. This structured event log is the system of record of the data platform, and drives Dagster’s operational, cataloging, alerting, and data quality tools.

We've received consistent feedback from users that they wish they could leverage the power of the event log and its associated tooling without using Dagster as the orchestrator. This is not because Dagster lacks value as an orchestrator. Far from it: those users have typically already adopted Dagster for greenfield use cases because they love its orchestration capabilities.

What happens is that they soon rely on Dagster for not just scheduling, but observability and operations as well, getting enormous value out of the system. Based on the experience and the quality of the tools, they want to get the rest of their existing platform and stakeholders into that system as quickly as possible.

However, the rest of their platform already uses a wide range of formal and informal orchestration solutions. The idea of synchronously migrating all of that infrastructure is untenable. Without the migration, they are still left with a siloed control plane. It’s just that one of the silos is much better than the others.

External assets provide a solution for exactly this situation. Teams can migrate to Dagster for lineage, observability, and data quality without migrating any scheduling or orchestration infrastructure.

How does it work?

It works by allowing users to:

- First, define their asset specifications (structure, lineage, and metadata) in a declarative fashion within Dagster.

- Then, use an API to inject events attached to those assets into the event log.

Declaring your Assets

You can declare an asset graph with specifications, which are just metadata with no associated materialization function.

These assets are viewable within Dagster, but cannot be materialized.

Attaching Events to Those Assets

You can attach events to these assets using APIs. There are three primary ways to do this: a REST API, a Dagster Sensor, or our Python API.

REST API

The primary tool for attaching events to External Assets is the REST API. With just a couple of lines of code, a user can call it from their existing code and systems in order to start using Dagster’s metadata capabilities.
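
To give a feel for how small that integration can be, here is a minimal sketch using only the Python standard library. It assumes the `/report_asset_materialization/` endpoint described in the Dagster docs and a webserver at a hypothetical base URL. The function names, the `raw_logs` asset key, and the metadata payload are illustrative, so confirm the exact path and body fields against the REST API reference for your version.

```python
import json
from urllib import request
from urllib.parse import quote


def build_materialization_request(base_url: str, asset_key: str, metadata: dict) -> request.Request:
    """Build (but do not send) a POST that reports an asset materialization."""
    # Assumed endpoint shape: <base_url>/report_asset_materialization/<asset_key>
    url = f"{base_url.rstrip('/')}/report_asset_materialization/{quote(asset_key)}"
    body = json.dumps({"metadata": metadata}).encode("utf-8")
    return request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def report_materialization(base_url: str, asset_key: str, metadata: dict, timeout: float = 10.0) -> int:
    """Send the request and return the HTTP status code."""
    req = build_materialization_request(base_url, asset_key, metadata)
    with request.urlopen(req, timeout=timeout) as resp:
        return resp.status
```

An existing job could call something like `report_materialization("http://localhost:3000", "raw_logs", {"row_count": 1250})` as its last step, leaving its own scheduling untouched.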

Using Dagster Sensors

Sometimes, as a user, you want to track metadata based on activity in another system, but you cannot or do not want to modify that system directly to push metadata to you. In those cases, you instead want to pull metadata via a polling mechanism.

Dagster already has a construct, sensors, designed to periodically run lightweight computations. Traditionally these have been used to monitor external state changes and kick off runs based on those changes (e.g. kick off a run whenever a new file appears in a specified S3 bucket). Now they can be repurposed for another end: to poll an external system and insert events into the event log based on that external system’s state.

Using a Python API

Lastly we have a Python API, so that users can insert metadata events into Dagster directly from Python scripts. This is useful for backfilling data, ad hoc operations, and other use cases.

What is the value?

A fair question is: why do this at all? Here are a few examples of the value of using external assets:

- System of record for metadata: You can use Dagster for your system of record for both definition-level and runtime metadata.

- Alerting: Dagster has built-in alerting that responds to event log events. External assets can leverage this.

- Cross-cutting data lineage: You can track the lineage of data assets as they cut across orchestrators and schedulers.

- Data Quality: You can track the results of data quality checks for external assets.

- Scheduling: You can schedule downstream computations based on events in the event log, either with auto-materialize policies or vanilla sensors.

Learn more about External Assets in the Dagster Docs.

Conclusion

External assets allow for adoption of Dagster’s metadata capabilities independently from its orchestrator. You can use Dagster for lineage, observability, data quality, alerting and other capabilities without migrating scheduling and orchestration infrastructure.

We still believe that, over time, organizations that adopt External Assets will also migrate their scheduling and orchestration infrastructure to Dagster. However, external assets provide an incremental pathway for getting there, with intermediate milestones that deliver tangible benefits to users at every step in the journey. They relieve pressure on the data platform team, allowing it to do infrastructure migrations at a time and pace of its choosing, while still deploying Dagster’s active metadata universally across the organization.
