Elementl CEO Pete Hunt shares the three priorities that guide how we will evolve Dagster.

We didn't start the Dagster project simply to make a great orchestrator. Our plan is to accelerate the adoption of software engineering best practices by every data team on the planet.

Data and ML engineering drive important decisions that influence billions of people and trillions of dollars worldwide, yet all too often the data pipelines backing these decisions are held together with duct tape and chewing gum. On top of that, engineering teams are drowning in the technical and organizational complexity found inside modern data-driven organizations.

We believe that embracing software engineering best practices is the only way for these teams to move faster, maximize quality, and keep their developers happy.

Because orchestration sits at the center of the increasingly complex data platform, it is the natural place for us to drive this change.

So far, we have delivered an orchestrator that has the reputation of having a great (the best?) developer experience due to our innovative core programming model (Software-defined Assets), superior CI/CD capabilities, and first-class local development and testing. However, we have much more work to do.

Nick Schrock, our founder and CTO, recently shared his perspectives on the state of organizational complexity, and Dagster’s role in helping to tame it. In the spirit of open-source and transparency, I would like to lay out what’s next for Dagster and the company behind it.

Priority #1: Flatten the learning curve

Dagster has a reputation for being extremely powerful. However, the learning curve is still too steep.

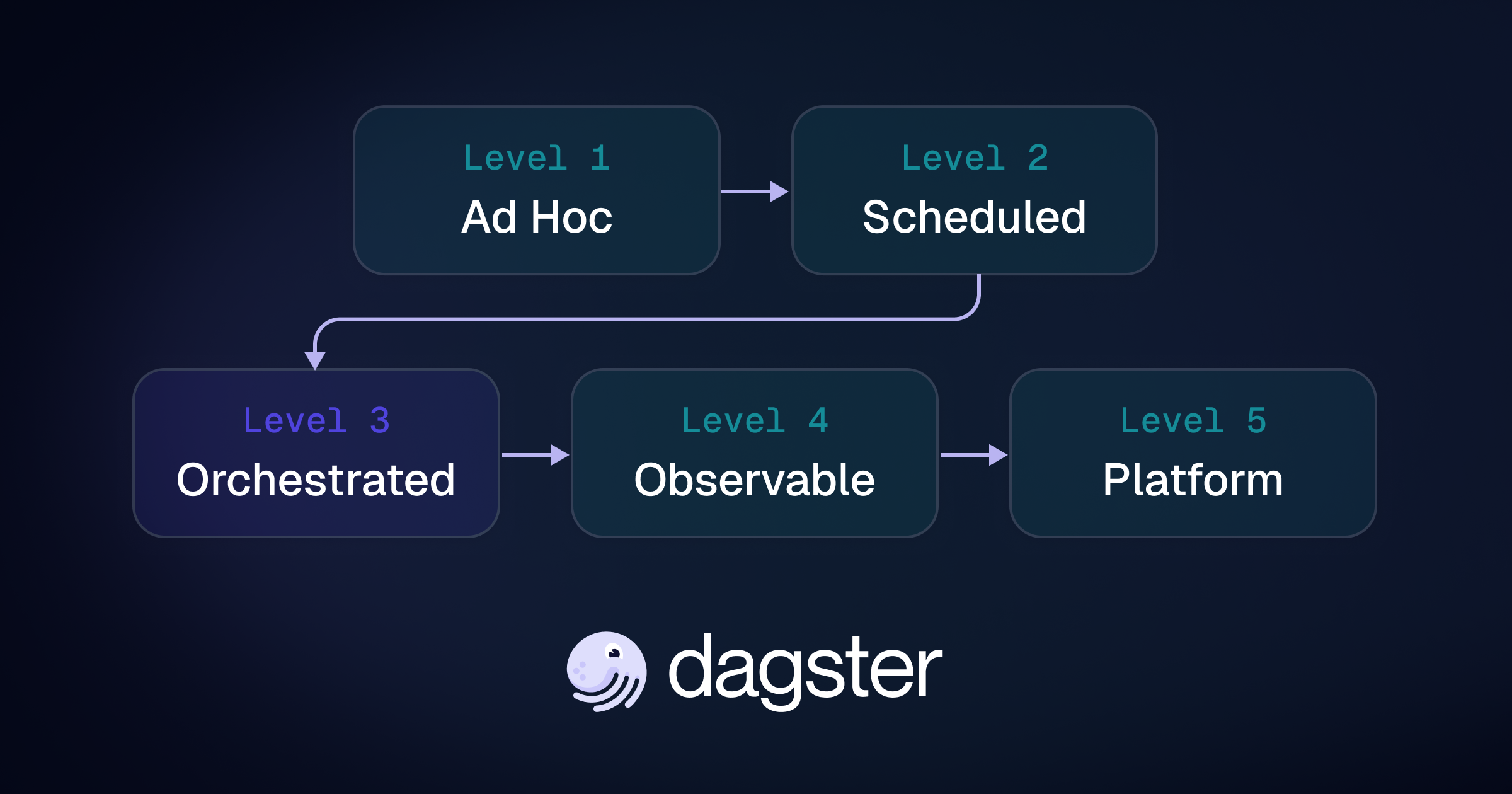

One reason for this is that Dagster is actually three things rolled into one: it’s a scheduler, an operational asset catalog, and a data transformation tool. We believe that these are naturally all part of the orchestrator and that this will become more obvious over time as the category matures. However, in the short term, this means that there are a lot of different concepts to learn. Historically we have introduced them all at once, which has been challenging for some newcomers.

The good news is that most people don't need to learn most of these concepts in order to be productive with Dagster. And when you do need to reach for these concepts, they layer in nicely with the rest of the system.

Over the next few quarters, we're going to focus our efforts on creating a streamlined onboarding experience. This means we're going to emphasize a subset of our features focused on Software-defined Assets. Additionally, we're going to make improvements to these essential SDA features to ensure they can scale to the broad set of needs of our users and are easy to reason about for those who are just getting started with Dagster. I want to emphasize that these will be primarily small fixes and polish work; we don't anticipate any major deprecations or fundamental changes to the system.

Priority #2: Evolve the orchestration category

One innovation that we've brought to orchestration is a unified asset graph that combines lineage, metadata, and operational history into a single system of record. We call this an operational asset catalog, and it's critical to our long-term strategy and differentiation.

We have only scratched the surface of the value that this can provide. As more metadata accrues to this graph, we can provide a ton more value to users.

- Consumption management. We can keep track of the resources that are consumed every time an asset is materialized. Not only can we report on and monitor costs, we can also put proactive guardrails in place to ensure that teams don't accidentally consume more resources than budgeted.

- Data quality. Improved observability and monitoring will help data engineers ensure that their data pipelines are delivering high-quality data. Additionally, incorporating data quality checks into the orchestrator's decision-making will help prevent bad data from spreading down the asset graph.

- Sandboxes. We can build a “forkable” version of the asset graph (like “git branch” for data assets). When combined with Dagster's branch deployments and branchable storage layers like Snowflake’s zero-copy clones or LakeFS, this will allow data engineers to create “copy-on-write” staging environments for every pull request that read from production and write to staging. This will massively improve developer velocity, especially for ML model development.

- Programmatic governance. We can integrate governance constraints directly into the orchestrator, ensuring that properties like data sovereignty and retention are programmatically enforced across the whole organization.
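To make the data quality idea above a little more concrete, here is a minimal sketch in plain Python. It is not Dagster's API; the asset names, the `run_graph` helper, and the check are all hypothetical and exist only for illustration. It shows one way a failed quality check on an asset could stop everything downstream of it from being computed, while unrelated assets still run.

```python
from graphlib import TopologicalSorter

# Hypothetical asset graph: each asset maps to the upstream assets it depends on.
DEPENDENCIES = {
    "raw_orders": set(),
    "clean_orders": {"raw_orders"},
    "daily_revenue": {"clean_orders"},
    "customer_list": set(),
}

# Hypothetical compute functions; each receives a dict of its upstream values.
COMPUTE = {
    "raw_orders": lambda up: [120, -5, 40],
    "clean_orders": lambda up: list(up["raw_orders"]),  # cleaning step left as a no-op
    "daily_revenue": lambda up: sum(up["clean_orders"]),
    "customer_list": lambda up: ["ada", "grace"],
}

# Hypothetical quality checks: an asset without an entry always passes.
CHECKS = {
    "clean_orders": lambda rows: all(amount >= 0 for amount in rows),
}


def run_graph(dependencies, compute, checks):
    """Compute assets in dependency order and return a status per asset.

    An asset is skipped if any of its upstream assets failed a check
    or was itself skipped, so bad data never reaches it.
    """
    values, status = {}, {}
    for name in TopologicalSorter(dependencies).static_order():
        upstream = dependencies[name]
        if any(status[u] != "ok" for u in upstream):
            status[name] = "skipped"
            continue
        value = compute[name]({u: values[u] for u in upstream})
        if checks.get(name, lambda _: True)(value):
            values[name] = value
            status[name] = "ok"
        else:
            status[name] = "failed_check"
    return status


status = run_graph(DEPENDENCIES, COMPUTE, CHECKS)
for asset_name, state in status.items():
    print(f"{asset_name}: {state}")
```

In this toy run, the negative order amount fails the check on `clean_orders`, so `daily_revenue` is skipped, while `customer_list` is unaffected because it sits on a separate branch of the graph.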

Priority #3: Accelerate our early commercial success

We are a venture-backed open-source company, so naturally, we often get questions about how we prioritize open-source vs. commercial work.

Fundamentally, the success of the Dagster OSS project is a prerequisite to the success of our commercial offering. Our strategy is to build a kick-ass, category-redefining orchestrator that, over time, becomes the standard. On top of that strong foundation, we're building a company that sells a hosted version of Dagster with features that larger organizations would otherwise need to build themselves.

We have been selling Dagster+, our commercial product, for a little under a year, and our commercial traction validates our strategy. We're confident that if we're successful in evolving the orchestration category and establishing a new standard, we can build a great, venture-scale company, which in turn can continue to build an amazing open-source project.

tl;dr

In essence, our master plan is as follows:

- Make Dagster both increasingly powerful and easier to use for all data practitioners.

- Integrate new types of metadata into the operational asset catalog.

- Deliver features above and beyond what's currently considered “orchestration” by leveraging this metadata.

- Roll out paid versions of these features with extra capabilities for teams and enterprises, such as access control and auditing.

- Keep doing this until software engineering best practices are widely adopted by every data team in the world.

We look forward to hearing your reactions to this. Join our community and be part of the conversation.

— Pete
