Expanding Dagster Pipes to support TypeScript, Rust, and Java

Why Expanding Dagster Pipes Matters

At Dagster, we understand that data teams work with diverse technology stacks. That’s why we built Pipes, a standardized interface for launching code in external environments with minimal additional dependencies. Pipes maintains full visibility through parameter passing, streaming logs, and structured metadata, making it particularly powerful for incremental adoption.

With the help of our community, we’ve expanded Pipes to include TypeScript, Rust, and Java. With these new implementations, you can now:

- Leverage Dagster Pipes in more ecosystems: Whether your team is working with TypeScript on a Node.js backend, Rust for high-performance data processing, or Java for enterprise-scale applications, you can now integrate seamlessly with Dagster.

- Standardize orchestration across your stack: No need to rewrite logic in Python—just use the language that makes the most sense for your project.

What’s New: Pipes for TypeScript, Rust, and Java

TypeScript

Dagster Pipes for TypeScript brings Dagster orchestration to the Node.js ecosystem. Backend teams can now launch their TypeScript processes from Dagster and stream logs and metadata back with only a few lines of code.

Rust

Dagster Pipes for Rust is perfect for teams that require high-performance, memory-efficient data processing. With Rust’s growing presence in the data ecosystem, this implementation lets you bring Rust’s safe, concurrent, and fast execution into your Dagster pipelines.

- Great for data-intensive workloads where performance is key.

- Provides Rust’s strong memory safety guarantees.

- Ideal for processing large-scale analytics workloads.

Java

Dagster Pipes for Java brings orchestration to one of the most widely used enterprise languages. Java-based data platforms, machine learning pipelines, and legacy applications can now seamlessly integrate with Dagster’s orchestration framework.

- Essential for teams working in enterprise environments.

- Ensures compatibility with JVM-based data ecosystems.

- Supports large-scale, mission-critical workflows.

TypeScript example

In your TypeScript project, install `@dagster-io/dagster-pipes` from npm and import it. You can then send logs and metadata back to Dagster from your script:

```typescript
// main.ts (compile to main.js, e.g. with tsc, before running it with node)
import OpenAI from 'openai';
import * as dagster_pipes from '@dagster-io/dagster-pipes';

// Open the Pipes session; `using` disposes of it when the module's scope ends
using context = dagster_pipes.openDagsterPipes();

// The OpenAI client reads OPENAI_API_KEY from the environment
const client = new OpenAI();

const response = await client.responses.create({
  model: 'gpt-4o',
  instructions: 'You are a coding assistant that talks like a pirate',
  input: 'Are semicolons optional in JavaScript?',
});

// [optional] return structured logs to Dagster
context.logger.info(response.output_text);

// [optional] send metadata to Dagster and report an asset materialization
context.reportAssetMaterialization({
  "openai_model": response.model,
});
```
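Each `context.logger.info` or `context.reportAssetMaterialization` call reaches Dagster as a small JSON message. The sketch below builds such messages by hand so you can see their rough shape. It assumes the envelope used by the Python `dagster-pipes` package (a `__dagster_pipes_version` field of `"0.1"` plus `method` and `params`) and its metadata encoding (`raw_value` plus a `type` hint); the helper names are hypothetical and are not part of `@dagster-io/dagster-pipes`, which builds and writes these messages for you. Treat it as an illustration and check the Pipes protocol docs before depending on exact fields.

```typescript
// Hypothetical helpers illustrating the (assumed) Dagster Pipes message envelope.
// NOT part of @dagster-io/dagster-pipes; the real library does this for you.

// Assumed protocol version, mirroring the Python dagster-pipes package.
const PIPES_PROTOCOL_VERSION = "0.1";

interface PipesMessage {
  __dagster_pipes_version: string;
  method: string;
  params: Record<string, unknown> | null;
}

// Assumed metadata encoding: raw value plus a type hint ("__infer__" = let Dagster infer).
interface PipesMetadataValue {
  raw_value: unknown;
  type: string;
}

function makeMessage(method: string, params: Record<string, unknown> | null): PipesMessage {
  return { __dagster_pipes_version: PIPES_PROTOCOL_VERSION, method, params };
}

// Roughly what context.logger.info(text) turns into (assumption).
function logMessage(text: string, level: string = "INFO"): PipesMessage {
  return makeMessage("log", { message: text, level });
}

// Roughly what context.reportAssetMaterialization(metadata) turns into (assumption).
function materializationMessage(metadata: Record<string, unknown>): PipesMessage {
  const encoded: Record<string, PipesMetadataValue> = {};
  for (const key of Object.keys(metadata)) {
    encoded[key] = { raw_value: metadata[key], type: "__infer__" };
  }
  return makeMessage("report_asset_materialization", {
    asset_key: null, // null: assumed to mean "the asset this run is materializing"
    data_version: null,
    metadata: encoded,
  });
}

// Assumed framing: one JSON object per line, which Dagster reads as the stream arrives.
function toJsonLine(message: PipesMessage): string {
  return JSON.stringify(message) + "\n";
}

const lines: string[] = [
  toJsonLine(logMessage("Arr, semicolons be optional!")),
  toJsonLine(materializationMessage({ openai_model: "gpt-4o" })),
];
console.log(lines.join(""));
```

Writing one self-describing JSON object per line keeps the external side trivial to implement in any language, which is what makes Pipes portable to TypeScript, Rust, and Java in the first place.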

On the Dagster side, your asset runs the compiled script with `PipesSubprocessClient` and returns the materialization result the script reports:

```python
import dagster as dg


@dg.asset
def example_typescript_asset(
    context: dg.AssetExecutionContext,
    pipes_subprocess_client: dg.PipesSubprocessClient,
) -> dg.MaterializeResult:
    # Path to the compiled TypeScript script (main.ts -> main.js)
    external_script_path = dg.file_relative_path(__file__, "../main.js")

    # Launch `node` as a subprocess; its logs and reported materialization
    # are streamed back to Dagster through Pipes
    return pipes_subprocess_client.run(
        command=["node", external_script_path],
        context=context,
    ).get_materialize_result()


defs = dg.Definitions(
    assets=[example_typescript_asset],
    resources={"pipes_subprocess_client": dg.PipesSubprocessClient()},
)
```
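When `PipesSubprocessClient` launches `node`, it hands the child process its Pipes bootstrap parameters through environment variables (`DAGSTER_PIPES_CONTEXT` and `DAGSTER_PIPES_MESSAGES`), which the Pipes library in the child decodes for you. The sketch below shows that encoding as we understand it from the Python `dagster-pipes` package: JSON, zlib-compressed, then base64-encoded. The helper names and the example `path` payload are hypothetical, and this is an illustration, not the TypeScript library's own code.

```typescript
// Hypothetical sketch of the (assumed) Pipes bootstrap-parameter encoding:
// JSON -> zlib compress -> base64. Not taken from @dagster-io/dagster-pipes.
import { Buffer } from "node:buffer";
import { deflateSync, inflateSync } from "node:zlib";

// What the orchestrator side is assumed to do before setting the env var.
function encodeParam(value: unknown): string {
  const json = JSON.stringify(value);
  // deflateSync emits the zlib format, matching Python's zlib.compress
  return deflateSync(Buffer.from(json, "utf-8")).toString("base64");
}

// What the external process does with the env var value.
function decodeParam(encoded: string): unknown {
  const compressed = Buffer.from(encoded, "base64");
  return JSON.parse(inflateSync(compressed).toString("utf-8"));
}

// Hypothetical payload: the subprocess client is assumed to point the child at temp files.
const contextParams = { path: "/tmp/dagster-pipes/context.json" };
const encoded = encodeParam(contextParams);

// Inside the child process this string would come from process.env.DAGSTER_PIPES_CONTEXT.
const decoded = decodeParam(encoded) as { path: string };
console.log(decoded.path);
```

Compressing and base64-encoding the JSON keeps the value safe to pass through environment variables, whatever characters the parameters contain.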

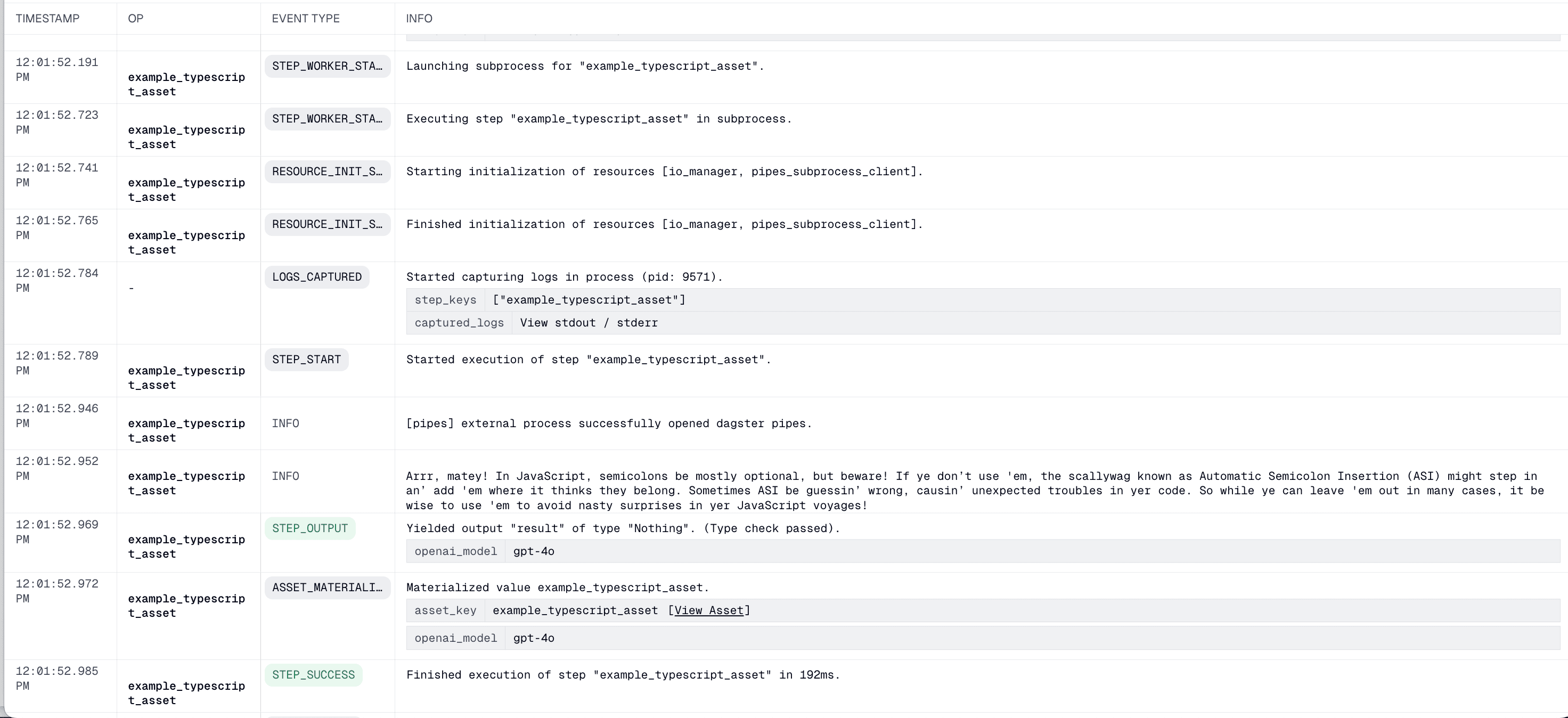

And you’ll get an output like this :

Getting Started

Ready to integrate Dagster Pipes into your TypeScript, Rust, or Java projects? Check out the documentation for each implementation and start orchestrating today:

- TypeScript: Read the Docs

- Rust: Read the Docs

- Java: Read the Docs

Tell us how you’re using these new implementations by joining the Dagster community. If there’s a Pipes implementation you’d like to see, let us know on GitHub or Slack; we’d love to collaborate with you.
