AI agents that only understand business definitions without knowing whether the underlying pipeline actually succeeded are confidently wrong and operational context from the orchestrator is the missing piece.

Atlan is hosting Activate on April 29, where it will make major announcements about the Enterprise Context Layer. Dagster is proud to be a Context Layer Partner. To learn more about the Dagster + Atlan integration, check out our integration docs.

There's a gap in how the industry talks about making AI agents enterprise-ready. Most of the conversation focuses solely on semantic context: business definitions, glossary terms, metric logic, and governance rules. This makes sense. An AI agent that doesn't know the difference between "net revenue" and "gross revenue" is going to produce bad answers.

But semantic context is only part of the picture. There are other dimensions that get far less attention, but are just as critical: data context (lineage, quality signals, technical metadata), knowledge context (institutional rules, edge cases, decision history), governance context (policies, sensitivity, access controls), user context (who’s asking and why), and our focus in this article, operational context. Operational context is the runtime reality of your data platform. Did the pipeline that produces net_revenue actually run today? Did it succeed? When did it last materialize? What upstream dependencies fed into it, and are they healthy?

These are questions only an orchestrator can answer. And right now, most AI agents can't ask them.

Multiple Kinds of Context, One Blind Spot

Think about how an experienced data engineer evaluates whether a number is trustworthy. They don't just check the definition. They check the pipeline, the users, and the data itself. They look at the orchestrator to see when the asset last materialized, whether the run succeeded, and whether any upstream sources are lagging. They have the tribal knowledge to spot any exceptions or anomalies. They have a mental model of the platform's operational state that informs their judgment.

AI agents don't have that mental model. They query a warehouse and get a table. They query a catalog and get a definition. Both are valuable. But neither tells them whether the data they're looking at is fresh, complete, or the product of a healthy pipeline run.

This creates a specific failure mode: the agent that gives a confident, well-defined, semantically correct answer based on stale or broken data. The definition is right. The number is wrong. And nobody knows until someone downstream gets burned.

Where Operational Context Comes From

Operational context is generated by the systems that actually execute your data workflows. It includes:

Materialization events. Did this asset get produced? When? Was it a full refresh or a partition update? Did it succeed or fail?

Dependency graphs. What upstream assets feed into this one? If a source table lags, every downstream asset may be affected. That's not something a catalog knows on its own.

Freshness signals. Is this asset within its expected SLA? A table that refreshes daily but hasn't been touched in 36 hours is a problem, but only if you know what "on time" looks like for that asset.

Run metadata. Run IDs, timestamps, partition keys, retry counts, error messages. This is the operational provenance that tells you not just what the data is, but what happened to produce it.

This metadata is ephemeral by nature. It's generated at runtime by the orchestrator. If you don't capture it somewhere durable and expose it alongside your semantic metadata, it disappears, and your context layer has a hole in it.

Why Asset-Centric Orchestration Changes the Equation

Not all orchestrators produce the same quality of operational context. Task-based systems know that "Job 47 ran at 3am." That's useful for debugging, but it doesn't connect cleanly to the data assets your AI agents and analysts actually care about.

Dagster takes a different approach. Every data asset is a first-class citizen in the system, with its own materialization history, freshness expectations, dependency relationships, and quality check results. This isn't just a different UI. It's a fundamentally richer metadata model.

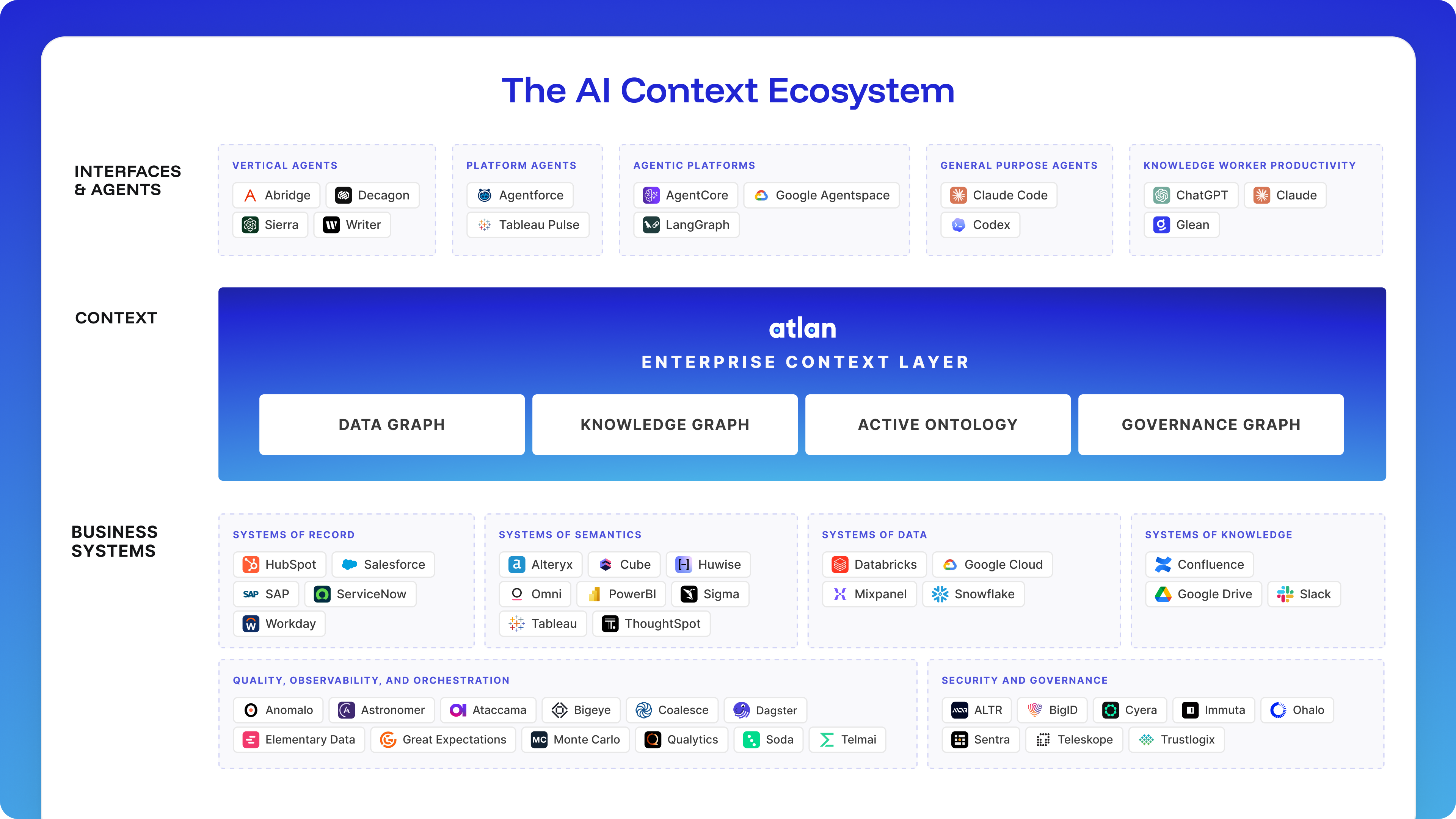

When a Dagster asset materializes, the system knows exactly what was produced, what produced it, what it depends on, and whether it met its quality and freshness criteria. That's structured operational context, and it maps directly to Atlan’s Enterprise Context Layer. The operational context, along with business definitions, lineage, quality signals, governance policies, and the full data graph, gives AI agents everything they need to act with confidence.

The Context Layer Has To Be Open and Operational

A context layer that only captures what data means is incomplete. For AI agents to act reliably on enterprise data, context has to be open and operable, meaning it reflects the live state of the data estate, not a stale snapshot.

Orchestrators are context producers. Every time Dagster materializes an asset, it generates a piece of context that belongs in the Enterprise Context Layer: which pipelines ran, when, whether they succeeded, what they produced, and how upstream dependencies behaved. That operational context — alongside business semantics, ontologies, governance policies, and decision traces — is what gives AI agents the complete picture they need to reason correctly. Without it, an agent can know what a metric means but not whether it's current. That's not enough for production.

Dagster pushes operational context into Atlan's Enterprise Context Layer to provide the context enterprises actually need at inference time. Materialization events, run status, asset lineage, partition keys, freshness signals, and custom tags flow into Atlan in real time as assets materialize or fail, with no batch sync or manual export needed. Atlan unifies that operational signal with the full Enterprise Context Graph: business semantics, ontologies, ownership, governance policies, sensitivity classification, and the decision traces that explain why data is governed the way it is.

Neither system alone gives AI agents the full picture. A context layer without operational signals can tell an agent what a metric means but not whether it's current. An orchestrator without a context layer can tell you a pipeline succeeded but not what the output represents in business terms.. The value is in the combination.

The Dagster + Atlan integration makes this concrete. Dagster streams operational metadata into Atlan's context layer in real time: materialization events, run status, asset lineage, partition keys, timestamps, descriptions, and custom tags. As assets materialize (or fail), events flow automatically. No batch sync, no manual export. Atlan unifies Dagster’s operational signals with the full Enterprise Context Graph, and serves the combined context to every AI tool you run via MCP.

This means that when an AI agent or a human analyst queries an asset in Atlan, they see the full context, its business definition, column-level lineage, data quality signals, governance policies, sensitivity classification, and ownership – alongside its operational state. They know what the asset means, where it came from, whether it passed its quality checks, who’s allowed to use it for what purpose, and that it was last materialized at 6:14am with all upstream dependencies healthy. That's a context layer with actual depth.

This Gets Harder as Unstructured Data Grows

So far, most of the context layer conversation has been grounded in structured, warehouse-native data: tables, columns, metrics, and dbt models. But the share of enterprise data that's unstructured is growing fast. PDFs, images, transcripts, embeddings, and documents are fed into RAG pipelines. AI workloads are pulling from sources that don't have neat schemas or well-established catalog entries.

This makes operational context even more important. When an AI agent reasons over a vector store populated by an embedding pipeline, there's no column-level lineage to fall back on. There's no glossary defining what "quarterly_earnings_chunk_47" means. The questions that matter are operational ones: When was this data last ingested? What source documents were processed? Did the embedding pipeline complete, or did it fail halfway through and leave the index in a partial state?

The data platforms that can track these workflows end to end, across structured and unstructured sources, and produce useful metadata at every step are the ones that will keep the context layer honest as AI workloads expand. An orchestrator that treats every asset the same way, whether it's a Snowflake table, a dbt model, or a vector database containing processed documents, provides your context layer with a single, consistent source of operational truth. That consistency matters more as the stack gets more heterogeneous, not less.

Context Layers Need Operational Depth

The teams that successfully move AI from pilot to production will be the ones that treat context as infrastructure, not an afterthought. Semantic definitions tell agents what data means. Operational signals tell them whether to trust it right now.

Orchestrators are uniquely positioned to provide that signal. No other system in the data stack has the same view into what ran, what succeeded, what failed, and what's at risk. By streaming that context into a unified layer alongside business definitions, governance rules, and lineage, you give AI agents the full picture they need to act reliably.

The context layer conversation has rightly centered on business meaning. It's time to bring operational reality into it.

.jpg)

.png)

.jpg)

.png)