Artemis built a data platform around Dagster+ to bring consolidated reporting to the $2.5T cryptocurrency markets.

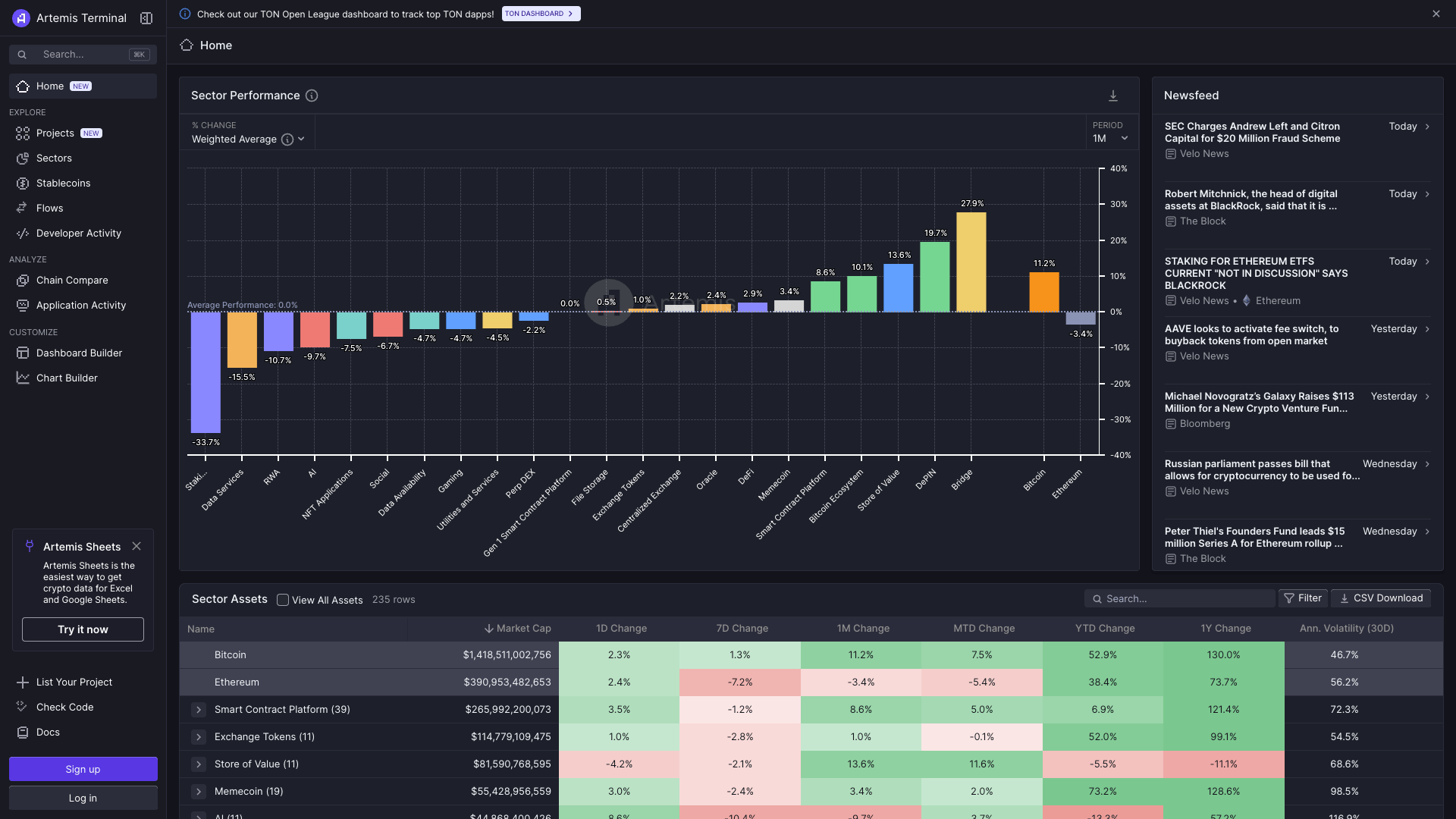

We explore how Artemis, a premier institutional data platform for digital asset fundamentals, leverages Dagster+ to serve thousands of customers, from financial institutions to individual investors and crypto protocols.

We got to chat with Alex Kan, Software Engineer at Artemis and a member of the 12-person team, half of whom are heavy Dagster users, including data engineers and data scientists.

In 2008, Satoshi Nakamoto published a paper titled "Bitcoin: A Peer-to-Peer Electronic Cash System." Since then, the world has witnessed the wild and sometimes rocky ascent of cryptocurrency.

From its early days on the fringes of the digital economy, crypto has established itself as a legitimate and significant part of the global financial system. As of 2024, there are over 22,000 different cryptocurrencies in existence, of which 9,124 are considered active [1]. The total market capitalization for the cryptocurrency sector now exceeds $2.5 trillion USD.

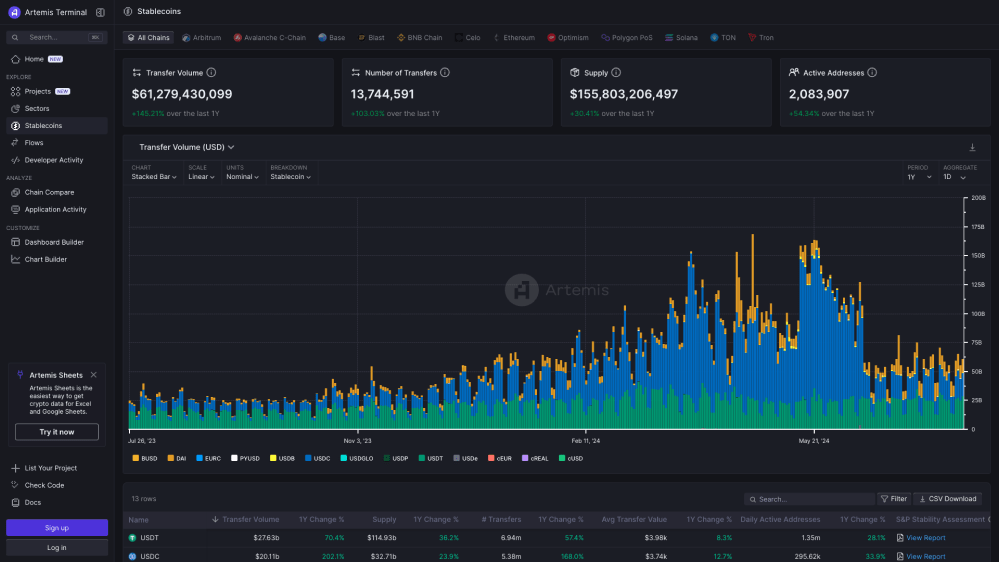

Stablecoins have also seen massive growth, with transfer volume peaking at over $150B a day in May 2024.

The cryptocurrency protocol stack generates a lot of public (onchain) data, but accessing that data in a consumable fashion is quite complex. There is no public repository of fundamental metrics like you might find for a centralized exchange like the NASDAQ or the NYSE.

And unlike traditional capital markets, which have structured quarterly earnings reports, crypto makes real-time reporting possible. Anybody can do diligence on a protocol's financials by accessing the public blockchain.

Blockchain nodes are the backbone of blockchain networks, responsible for validating transactions and maintaining the blockchain ledger. This has made it easier to participate in the cryptocurrency ecosystem, but financial reporting is still complex.

Indexing the raw data from blockchains like Ethereum and Solana is also hard: these datasets are often dozens of TB in size.

An ecosystem of reporting firms has set about making real-time crypto data available to the masses. Artemis is one such crypto data provider. Think of it as Bloomberg for the crypto markets.

Artemis: “The Bloomberg of Crypto”

The Artemis team aggregates data from many blockchains in the crypto space and provides the cleansed, structured, aggregated data to a range of data consumers, including institutions in traditional finance, research analysts and crypto-native investors at liquid token hedge funds, and retail investors. Artemis also works closely with the crypto protocols themselves, which use Artemis data in their own workflows.

Artemis delivers its data through its Artemis Terminal, an Artemis API, and data shares on Snowflake. In addition, Artemis provides Excel and Google Sheets plugin integration to pull data directly into a spreadsheet.

Most equity research analysts or quants do not have data engineering backgrounds or a data platform team, so the service Artemis provides is highly valuable to them, making crypto data instantly consumable.

Currently, Artemis provides dozens of terabytes of data to several thousand customers. The larger blockchains have thousands of transactions per second (TPS), while smaller networks may only see a dozen TPS. The architecture of each chain also determines how big each transaction record is and what data gets logged.
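To give a feel for how throughput turns into data volume, here is a rough back-of-the-envelope sketch. The TPS figures follow the orders of magnitude mentioned above; the 500-byte record size is purely an assumption for illustration and is not an Artemis figure. As noted, real record sizes vary with each chain's architecture.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds

# Assumption for illustration only: average stored size of one transaction record.
ASSUMED_BYTES_PER_TX = 500


def daily_transactions(tps: float) -> int:
    """Transactions produced in one day at a sustained rate of `tps`."""
    return int(tps * SECONDS_PER_DAY)


def daily_raw_bytes(tps: float, bytes_per_tx: int) -> int:
    """Approximate raw bytes generated per day, given a record size."""
    return daily_transactions(tps) * bytes_per_tx


if __name__ == "__main__":
    # Hypothetical chains at the two ends of the range described in the text.
    for name, tps in [("large chain", 1_000), ("small chain", 12)]:
        txs = daily_transactions(tps)
        gigabytes = daily_raw_bytes(tps, ASSUMED_BYTES_PER_TX) / 1e9
        print(f"{name}: {txs:,} tx/day, roughly {gigabytes:.1f} GB/day")
```

Under these assumptions, a chain sustaining 1,000 TPS produces about 86 million transactions a day, so even modest per-record sizes add up to terabytes within months.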

The complexity lies not only in the volume of data but also in the lack of standards across blockchains. Aggregating and benchmarking the data across providers takes a lot of data processing. Different chains provide different features (like smart contracts). And unlike traditional financial markets, there is no “market close”: crypto is traded 24/7/365.
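As an illustration of what the lack of standards means in practice, the sketch below maps two differently shaped transaction records into one common schema. The chains (`chain_a`, `chain_b`), field names, and decimal precisions are all hypothetical; this is not Artemis's actual data model, only a minimal example of the kind of normalization step such a platform needs.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class NormalizedTx:
    """Common schema that downstream metrics can rely on."""
    chain: str
    tx_id: str
    timestamp: datetime  # always timezone-aware UTC
    fee_native: float    # fee in whole native tokens


def from_chain_a(rec: dict) -> NormalizedTx:
    # Hypothetical chain A: Unix-seconds timestamps, fees in base units (18 decimals).
    return NormalizedTx(
        chain="chain_a",
        tx_id=rec["hash"],
        timestamp=datetime.fromtimestamp(rec["block_time"], tz=timezone.utc),
        fee_native=rec["fee_base_units"] / 10**18,
    )


def from_chain_b(rec: dict) -> NormalizedTx:
    # Hypothetical chain B: ISO 8601 timestamps, fees in base units (9 decimals).
    return NormalizedTx(
        chain="chain_b",
        tx_id=rec["signature"],
        timestamp=datetime.fromisoformat(rec["time"]),
        fee_native=rec["fee_units"] / 10**9,
    )


if __name__ == "__main__":
    a = from_chain_a({"hash": "0xabc", "block_time": 1714521600,
                      "fee_base_units": 2 * 10**15})
    b = from_chain_b({"signature": "5Xyz", "time": "2024-05-01T00:00:00+00:00",
                      "fee_units": 5000})
    print(a)
    print(b)
```

Once every source is mapped into the same shape, cross-chain comparisons become ordinary queries over one table rather than per-chain special cases.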

Behind the scenes, Artemis taps into the indexing services that are now commoditized in the industry. Many providers will run the blockchain nodes (the backbone of blockchain networks) as a service and index the data.

An MDS tech stack

Broadly speaking, Artemis uses a modern data stack (MDS), favoring composable, often open-source technologies.

- Most of the data is ingested directly from Snowflake Shares, Snowflake’s marketplace for datasets from a variety of data providers

- Where data is ingested from other APIs or a node for an unsupported chain, this is done directly in Dagster

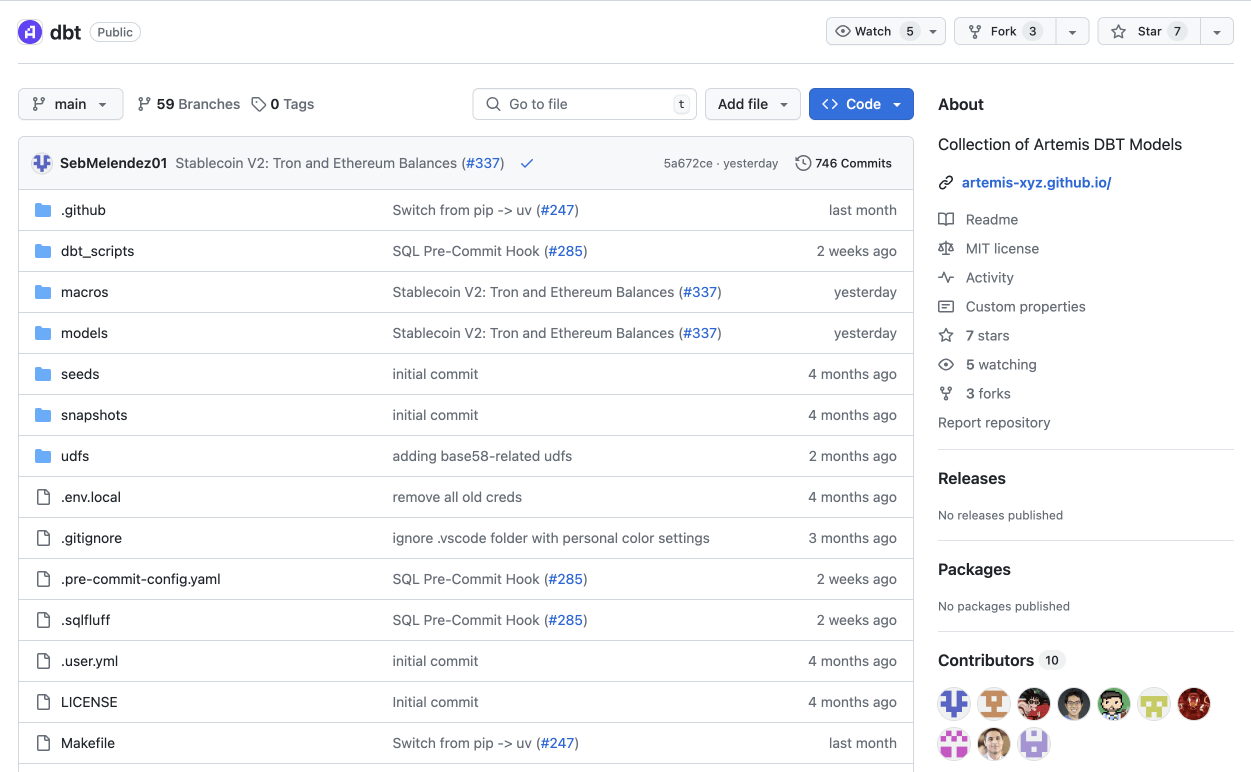

- Data modeling is done in dbt Core

- Jobs run on Amazon ECS

- Hightouch is used for reverse ETL

- BigQuery is also used for some more niche data sources

- Hex is used for data sandboxing and reporting

- Django and Next.js then support the front-end app

This streamlined stack lets the team iterate and ship quickly across the entire application.

Moving off Apache Airflow

Artemis ran Airflow as a managed service for the first year of its existence. The team chose Amazon Managed Workflows for Apache Airflow (MWAA) because they were scrappy and already committed to AWS. However, the system fell short on reliability (due to the Airflow scheduler), and the non-engineers who contributed to the code base (such as data scientists and researchers) found the system hard to work with.

Anthony Yim, Co-founder and CTO at Artemis, further explains: “What was also tough is managing data lineages especially when a model failed to build. We would have to rerun the whole lineage rather than a specific asset.”

“Debugging failures was hard since there was no single pane of glass to view assets and statuses. In addition, the UI on Airflow often just didn't work or was very cryptic. Finally, as an organization that needed to step up its data pipeline game, we wanted to use DBT, and Airflow didn't have a tight integration with DBT tools (at least not back then).”

Another challenge of Airflow: the lack of a dbt integration.

It was clear that Airflow would not support the team's next stage of growth and was increasingly adding drag to their development cycles. The team looked for a more fit-for-purpose system that provided better lineage, tagging, and a more intuitive framework for pipelines.
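The lineage pain point in the quote above (rerunning a whole lineage when only one model failed) can be made concrete with a small sketch. Given a dependency graph, only the failed asset and whatever sits downstream of it need to be rebuilt. The asset names and the `LINEAGE` graph below are hypothetical, and this is plain Python rather than any orchestrator's API.

```python
from collections import deque

# Hypothetical lineage: each asset maps to the assets that directly depend on it.
LINEAGE = {
    "raw_transactions": ["daily_fees", "daily_active_addresses"],
    "daily_fees": ["protocol_revenue"],
    "daily_active_addresses": [],
    "protocol_revenue": [],
}


def assets_to_rerun(failed: str, lineage: dict) -> list:
    """Return the failed asset plus everything downstream of it.

    Assets are listed in breadth-first discovery order. A real orchestrator
    would additionally schedule them in dependency order.
    """
    seen = {failed}
    order = [failed]
    queue = deque([failed])
    while queue:
        current = queue.popleft()
        for child in lineage.get(current, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


if __name__ == "__main__":
    # Only two of the four assets need rebuilding when daily_fees fails.
    print(assets_to_rerun("daily_fees", LINEAGE))
```

An asset-aware orchestrator can make this kind of targeted rerun a first-class operation, which is the lineage capability the team was looking for.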

Building on Dagster+

The Dagster framework, with its asset orientation, proved much more intuitive and a better fit.

“Software-defined Assets were a novel approach,” says Alex. “Also, not having to write custom Airflow Operators bc of Dagster's builtin I/O managers was huge.”

The tagging and built-in cataloging and lineage make data collaboration much smoother, and medium-code practitioners (people with a data science or analytics background and focus who nonetheless write code) found Dagster’s framework much more accessible.

Again, because they lack a full-time DevOps team, Artemis selected the hosted option, Dagster+, which provides many enhancements around user management, cataloging, and cost observability.

While Alex joined the team after the transition, he had prior experience working on and contributing to Dagster and was able to rapidly bring new value to the team. He expanded the Dagster+ setup to multiple code locations and organized assets in groups for better scheduling and alerting.

Opening the black box: open source dbt models

Analysts and investors naturally need to trust the data they are working with. To address concerns and answer analyst questions about methodology, Artemis took the bold step of open-sourcing the code for their dbt models, which you can find here.

This approach of openness aligns with the culture of decentralized digital currency, and other projects have also openly shared their business logic, such as Dune’s Spellbook.

A platform for growth

Today, the Artemis team has a streamlined data operation that will support their growth for the coming years, as digital assets continue to expand, evolve, and become more mainstream. Whatever digital currency the world decides to adopt next, Alex and the Artemis team will be ready to help investors grasp the fundamentals and make informed investment decisions.
