Why Is Data Lineage Important in dbt Projects?

Data lineage refers to the end-to-end tracking of data as it moves through systems, from its origin to its final destination. It maps the flow through every transformation, integration, and aggregation step, providing a detailed view of how data evolves. Data lineage answers questions about the sources, transformations, and usage of each dataset, making it possible to trace data anomalies back to their root causes.

Data lineage plays a critical role in dbt projects by giving data and analytics engineers the context they need to manage both upstream and downstream dependencies effectively. In dbt, where models are tightly coupled and changes in one can impact many others, lineage enables teams to prevent issues and respond quickly when problems arise.

3 Key Use Cases of Data Lineage in dbt

Here are some of the common ways data lineage is used in dbt projects:

- Understanding downstream impact before deploying changes. When modifying a core model, engineers can trace its dependencies to see what downstream assets—like tables, dashboards, or reports—might be affected. This supports impact analysis during deployment testing, helping teams evaluate the criticality of downstream components and avoid breaking high-priority business assets.

- Faster root cause analysis during incidents. If an issue appears, such as missing data or unexpected null values, lineage helps identify the upstream models causing the issue, as well as which downstream models or reports rely on the affected source. This enables teams to quickly take corrective action, assess who and what might be impacted, and notify stakeholders early.

- Supporting data discovery and data quality by tracing metrics upstream. When business users rely on dashboards for important decisions, they can use lineage to trace metrics back to their sources. This lets them inspect how metrics are defined, what data is used, and whether the inputs are reliable.

In both development and business contexts, data lineage makes dbt projects more transparent, reliable, and easier to manage at scale.
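The impact analysis described above amounts to a graph traversal. dbt writes a manifest.json artifact to the target/ directory, and its child_map key lists the direct children of each node. The sketch below assumes a dictionary of that shape has already been loaded; the project name `shop` and all node names are hypothetical.

```python
from collections import deque


def downstream_of(child_map: dict[str, list[str]], start: str) -> list[str]:
    """Return every node reachable from `start`, in breadth-first order."""
    seen = {start}
    queue = deque([start])
    impacted = []
    while queue:
        node = queue.popleft()
        for child in child_map.get(node, []):
            if child not in seen:
                seen.add(child)
                impacted.append(child)
                queue.append(child)
    return impacted


# Hypothetical graph in the same shape as the manifest's child_map.
child_map = {
    "source.shop.raw.orders": ["model.shop.stg_orders"],
    "model.shop.stg_orders": ["model.shop.fct_orders"],
    "model.shop.fct_orders": [
        "model.shop.revenue_daily",
        "exposure.shop.sales_dashboard",
    ],
    "model.shop.revenue_daily": ["exposure.shop.sales_dashboard"],
}

impacted = downstream_of(child_map, "model.shop.stg_orders")
```

Running the same traversal over the manifest's parent_map walks in the other direction, which is the shape of the root cause analysis use case.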

How Data Lineage Works in dbt

Data lineage in dbt is built around the dependency graph (the DAG), which maps how sources, models, snapshots, metrics, and exposures connect. dbt infers this graph from the ref() and source() calls in your code, so it stays in sync with the project, and dbt’s documentation site renders it as a visual view of how data flows from raw inputs to final outputs. Each node represents a dataset or transformation, and edges show the dependencies between them.

Models are the core of this process. They are SQL or Python files that apply transformation logic to raw data. Staging models handle standardization and cleanup, while transformation models build on them to create business-ready datasets such as summaries or metrics. Clear naming and defined dependencies keep the lineage graph accurate and maintainable.

Snapshots extend datasets by capturing historical changes in source data. For example, a customer’s changing address is stored with timestamps, making it possible to reconstruct data states over time. Snapshots automatically integrate into the graph when referenced by models.

Metrics add another layer by defining reusable business calculations directly in dbt. They encapsulate logic, such as revenue or average order value definitions, which can be consistently applied across multiple models.

Exposures connect the lineage graph to external tools, documenting how transformed data powers dashboards, reports, or machine learning models.

At the technical level, lineage relies on two functions:

- source() marks the raw origin of data. Models referencing a source become dependent nodes.

- ref() links one model to another, ensuring dbt executes dependencies in the right order.

Together, these elements make lineage in dbt both explicit and automated, giving teams a clear, verifiable map of data movement from ingestion to business use.
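To make the role of the two functions concrete, here is a minimal sketch that pulls ref() and source() calls out of model SQL with regular expressions. This is an illustration only: dbt itself resolves these calls through its own Jinja parsing, and the patterns below handle just the single-argument ref('model') and two-argument source('source', 'table') forms. The model and source names are hypothetical.

```python
import re

# Matches {{ ref('model_name') }} with either quote style.
REF_PATTERN = re.compile(r"\{\{\s*ref\(\s*['\"]([^'\"]+)['\"]\s*\)\s*\}\}")

# Matches {{ source('source_name', 'table_name') }}.
SOURCE_PATTERN = re.compile(
    r"\{\{\s*source\(\s*['\"]([^'\"]+)['\"]\s*,\s*['\"]([^'\"]+)['\"]\s*\)\s*\}\}"
)


def extract_dependencies(sql: str) -> dict[str, list]:
    """Return the upstream models and sources that a model's SQL refers to."""
    return {
        "refs": REF_PATTERN.findall(sql),
        "sources": SOURCE_PATTERN.findall(sql),
    }


stg_orders_sql = """
select id as order_id, customer_id, amount
from {{ source('shop', 'raw_orders') }}
"""

fct_orders_sql = """
select o.order_id, c.customer_name, o.amount
from {{ ref('stg_orders') }} as o
join {{ ref('stg_customers') }} as c on o.customer_id = c.customer_id
"""

stg_deps = extract_dependencies(stg_orders_sql)
fct_deps = extract_dependencies(fct_orders_sql)
```

Each extracted name becomes an edge into the model that contains the call, which is why the graph needs no hand-maintained dependency list.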

Data Lineage Challenges in dbt

As dbt projects grow in size and complexity, maintaining clear and usable lineage becomes more difficult. Two of the main challenges are scaling large DAGs and capturing lineage at the column level.

Scaling Data Pipelines

When the number of models, sources, seeds, macros, and exposures increases into the thousands, the lineage graph (DAG) can become overwhelming. Large DAGs make it harder to spot inefficiencies, duplicated logic, or violations of best practices.

Without a structured approach, teams may find it difficult to audit or even navigate the graph effectively. To manage this, projects need strong naming conventions, consistent documentation, and automated tools like the dbt_project_evaluator package to surface issues that would be impractical to check manually.
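As one example of a check that is tedious to do by hand, the sketch below flags model names that do not follow a layer-prefix convention. The prefixes (stg_, int_, fct_, dim_) are an assumed convention rather than something dbt enforces, and this standalone function is not how dbt_project_evaluator is implemented.

```python
# Assumed naming convention: staging, intermediate, fact, and dimension models.
ALLOWED_PREFIXES = ("stg_", "int_", "fct_", "dim_")


def models_breaking_convention(model_names, prefixes=ALLOWED_PREFIXES):
    """Return the model names that start with none of the allowed prefixes."""
    return sorted(name for name in model_names if not name.startswith(prefixes))


# Hypothetical model names.
offenders = models_breaking_convention(
    ["stg_orders", "orders_final", "fct_revenue", "tmp_customers"]
)
```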

Column-Level Lineage

Most lineage systems, including dbt’s DAG, operate at the table level. This works well for understanding dependencies but falls short when teams need visibility into how individual columns are created and transformed. For example, if a metric is derived from several joined or pivoted tables, table-level lineage alone does not reveal the exact transformations applied to the fields.

As organizations demand more detailed transparency, column-level lineage becomes essential for debugging, auditing, and ensuring trust in data definitions. Features like column-level lineage in dbt Explorer are designed to close this gap, offering more granular insight into data flows.
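To show what column-level lineage adds over the table-level graph, the sketch below traces one column back to the raw columns that feed it. It assumes the column-to-column mappings have already been extracted by some other tool (parsing SQL to obtain them is the hard part and is not shown), and every model and column name is hypothetical.

```python
# A column is identified by (model name, column name).
ColumnRef = tuple[str, str]


def trace_column(
    column_parents: dict[ColumnRef, list[ColumnRef]], target: ColumnRef
) -> set[ColumnRef]:
    """Return the root columns (those with no recorded parents) feeding `target`."""
    roots = set()
    visited = set()
    stack = [target]
    while stack:
        col = stack.pop()
        if col in visited:
            continue
        visited.add(col)
        parents = column_parents.get(col, [])
        if not parents and col != target:
            roots.add(col)
        stack.extend(parents)
    return roots


# Hypothetical mappings: each column lists the upstream columns it derives from.
column_parents = {
    ("fct_orders", "net_revenue"): [
        ("stg_orders", "amount"),
        ("stg_refunds", "refund_amount"),
    ],
    ("stg_orders", "amount"): [("raw_orders", "amount_cents")],
    ("stg_refunds", "refund_amount"): [("raw_refunds", "amount_cents")],
}

origins = trace_column(column_parents, ("fct_orders", "net_revenue"))
```

Table-level lineage would only report that fct_orders depends on stg_orders and stg_refunds; the column mapping shows which fields contribute to net_revenue.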

Together, these challenges highlight the importance of balancing project structure, tooling, and detail level to keep lineage useful rather than overwhelming.

Best Practices for Robust Data Lineage with dbt

1. Automate Lineage Capture and Maintenance

Automation is essential for reliable data lineage, especially in rapidly evolving dbt projects. Because dbt builds its DAG from the code itself, lineage is captured automatically as part of the development process. This reduces manual effort, prevents outdated documentation, and lowers the risk of missing critical dependencies. Scheduled builds and documentation generation help keep lineage information current, even as the project scales and evolves.

Automating lineage maintenance also involves integrating linting or continuous integration checks that validate changes to models and dependencies. When developers propose new models or modify SQL logic, automated checks can warn about missing references or potential impacts on downstream tables. These guardrails enable teams to catch issues earlier in the development lifecycle, minimizing the risk of breaking existing workflows.
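A CI guardrail for missing references could look something like the sketch below, which flags hard-coded relation names that bypass ref() and source() and therefore never appear in the lineage graph. The regular expression is a rough heuristic, not a SQL parser: it only catches dotted names after FROM or JOIN and can misfire on expressions such as extract(year from t.created_at). The table names are hypothetical.

```python
import re

# Matches `from schema.table` or `join db.schema.table`.
# Jinja calls start with "{{", so ref() and source() are not matched.
HARDCODED_RELATION = re.compile(
    r"\b(?:from|join)\s+([A-Za-z_]\w*(?:\.[A-Za-z_]\w*)+)",
    re.IGNORECASE,
)


def find_hardcoded_relations(sql: str) -> list[str]:
    """Return dotted relation names referenced directly instead of via ref()/source()."""
    return HARDCODED_RELATION.findall(sql)


model_sql = """
select o.order_id, c.customer_name
from analytics.orders as o
join {{ ref('stg_customers') }} as c on o.customer_id = c.customer_id
"""

violations = find_hardcoded_relations(model_sql)
```

A CI job could run this over changed model files and fail the build when the list is non-empty.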

2. Ensure Both Upstream and Downstream Visibility

Complete lineage documentation requires visibility into both upstream sources and downstream consumers. dbt makes it easy to trace upstream dependencies through the use of references, but teams should also document where outputs are consumed—whether by dashboards, APIs, or data science workflows. This dual perspective enables a full understanding of impact, which is necessary for safely managing changes and troubleshooting failures.

With clear upstream and downstream lineage, teams can perform true impact analysis before making modifications. For example, before changing a column in an upstream model, engineers can list and evaluate every affected downstream report, preventing surprises in production. This end-to-end visibility is essential for organizations looking to maintain high reliability and accountability in their analytics stack.

3. Enrich Lineage with Business Context

Technical lineage provides visibility into the mechanics of data transformation, but adding business context greatly increases its value. Enriching lineage with metadata such as data owner, business glossary terms, and usage notes enables stakeholders to understand not just how data is processed, but why it exists and what it represents. dbt’s documentation blocks and metadata features make it possible to annotate models and columns directly within the codebase.

Business context facilitates collaboration between technical and non-technical staff, ensuring data consumers know whether a dataset is fit for purpose. Tagging sensitive or regulated fields, for instance, helps meet compliance requirements and supports better governance. When business context is paired with technical lineage, organizations can bridge the gap between raw data and strategic decision-making.
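One way to keep business context from drifting is to check that it exists. The sketch below lists models that have no owner recorded in their metadata. It assumes a dictionary shaped like a simplified subset of the nodes section of manifest.json, and the `owner` key is a hypothetical team convention: dbt's meta field is free-form and does not require it.

```python
def models_missing_owner(nodes: dict[str, dict]) -> list[str]:
    """Return the unique IDs of models whose meta has no non-empty `owner`."""
    missing = []
    for unique_id, node in nodes.items():
        if node.get("resource_type") != "model":
            continue  # seeds, tests, and snapshots are out of scope here
        if not node.get("meta", {}).get("owner"):
            missing.append(unique_id)
    return sorted(missing)


# Hypothetical, simplified nodes.
nodes = {
    "model.shop.fct_orders": {
        "resource_type": "model",
        "meta": {"owner": "finance-analytics", "contains_pii": False},
    },
    "model.shop.stg_customers": {"resource_type": "model", "meta": {}},
    "seed.shop.country_codes": {"resource_type": "seed", "meta": {}},
}

unowned = models_missing_owner(nodes)
```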

4. Integrate Lineage with Change Management and Alerts

Integrating lineage with change management processes ensures that all modifications to data models and pipelines are assessed for potential impact before being merged or deployed. Because dbt projects live in version control and run in CI/CD pipelines, teams can automate validation, flag risks, and require peer reviews for changes affecting critical dependencies. Aligning lineage with change management in this way reduces broken pipelines and downstream disruptions.

Real-time alerts tied to lineage-aware checks further enhance resilience. For example, if a source table is unexpectedly altered, automated alerts can notify responsible teams and identify all impacted models, dashboards, or reports. Proactive notification of schema or dependency changes allows teams to react quickly and maintain trust in data deliveries without relying solely on manual reviews or spot checks.
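The detection half of such an alert can be sketched as a comparison between the columns a source is expected to have and the columns actually observed in the warehouse. How the observed schema is fetched and how the alert is delivered are left out; the column names and types below are hypothetical.

```python
def schema_drift(expected: dict[str, str], observed: dict[str, str]) -> dict[str, list[str]]:
    """Compare column-name -> type mappings and report what changed."""
    shared = set(expected) & set(observed)
    return {
        "removed": sorted(set(expected) - set(observed)),
        "added": sorted(set(observed) - set(expected)),
        "retyped": sorted(col for col in shared if expected[col] != observed[col]),
    }


# Hypothetical schemas for a source table.
expected = {"id": "integer", "amount": "numeric", "status": "text"}
observed = {"id": "bigint", "amount": "numeric", "channel": "text"}

drift = schema_drift(expected, observed)
```

Any non-empty entry could then be combined with a downstream traversal of the lineage graph to decide which owners to notify.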

5. Track Lineage of dbt Model Usage with Exposures

Lineage in dbt is not limited to transformations inside the warehouse; it should also extend to tracking how models are consumed. Many teams stop at documenting dependencies between models, but without visibility into where outputs are used, impact analysis remains incomplete.

Exposures in dbt provide a structured way to capture this usage. By defining exposures for dashboards, reports, or machine learning jobs that depend on dbt models, teams can formally document the connection between transformations and business outcomes. This makes it possible to trace the effect of a model change not just on downstream models, but on the actual decision-making tools used by stakeholders.
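Once exposures are defined, they can be inverted into a lookup from model to the business assets that depend on it. The sketch below assumes a dictionary shaped like the exposures section of manifest.json, where each exposure lists the nodes it depends on; the exposure and model names are hypothetical.

```python
from collections import defaultdict


def exposures_by_model(exposures: dict[str, dict]) -> dict[str, list[str]]:
    """Map each upstream node ID to the names of the exposures that depend on it."""
    index = defaultdict(list)
    for exposure in exposures.values():
        for node_id in exposure.get("depends_on", {}).get("nodes", []):
            index[node_id].append(exposure["name"])
    return {node_id: sorted(names) for node_id, names in index.items()}


# Hypothetical exposures.
exposures = {
    "exposure.shop.sales_dashboard": {
        "name": "sales_dashboard",
        "type": "dashboard",
        "depends_on": {
            "nodes": ["model.shop.fct_orders", "model.shop.revenue_daily"]
        },
    },
    "exposure.shop.churn_model": {
        "name": "churn_model",
        "type": "ml",
        "depends_on": {"nodes": ["model.shop.fct_orders"]},
    },
}

usage = exposures_by_model(exposures)
```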

Beyond exposures, tracking lineage of dbt model usage can be enriched by integrating dbt with observability platforms that log all queries against warehouse tables. These tools reveal which teams, queries, or applications access a model, giving engineers evidence of actual consumption rather than assumed dependencies.

Orchestrating dbt Data Pipelines with Dagster

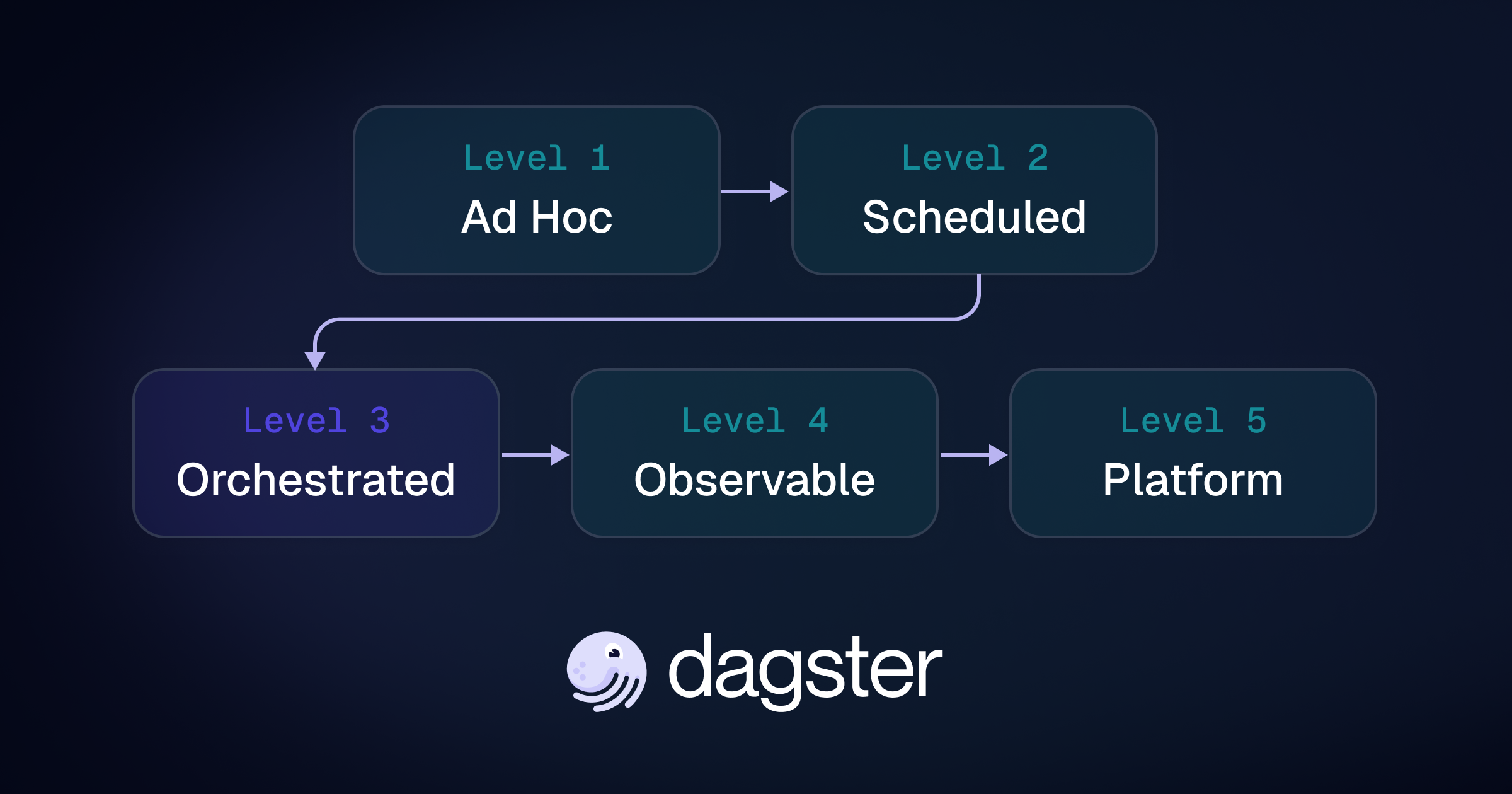

Dagster is an open-source data orchestration platform with first-class support for orchestrating dbt pipelines. As a general-purpose orchestrator, Dagster allows you to scale beyond dbt and track the lineage of not only your SQL transformations but also your entire data platform.

It offers teams a unified control plane for not only dbt assets, but also ingestion, transformation, and AI workflows. With a Python-native approach, it unifies SQL, Python, and more into a single testable and observable platform.

Best of all, you don’t have to choose between Dagster and dbt Cloud™. Start by integrating Dagster with existing dbt projects to unlock better scheduling, lineage, and observability. Learn more by heading to the docs on Dagster’s integration with dbt and dbt Cloud.
