What Is Data Engineering Software?

Data engineering software refers to tools and platforms to manage the collection, transformation, storage, and delivery of data across an organization. These solutions enable businesses to gather data from various sources, process it efficiently, and store it in formats appropriate for downstream analytics, reporting, or machine learning tasks. The software aims to automate and simplify data flow, reduce human errors, and ensure scalability.

Data engineering software includes data orchestrators like Dagster, platforms such as BigQuery and Apache Spark for processing large datasets, data streaming tools such as Apache Kafka, and data transformation tools such as dbt (Data Build Tool).

Modern data engineering software supports complex data pipelines, integrates well with existing systems, and provides interfaces for monitoring and debugging workflows. It aids in making data accessible, consistent, and reliable.

Data Engineering Software vs. Data Engineering Frameworks

Data engineering software and frameworks serve different but related purposes. Software platforms are full-featured products designed to cover the end-to-end data lifecycle. They typically include graphical interfaces, built-in connectors, monitoring tools, and governance features, making them suitable for enterprise adoption. Examples include Apache NiFi or Prefect, which provide ready-to-use orchestration, security, and management capabilities.

Frameworks are lower-level libraries or toolkits that give engineers building blocks to implement custom solutions. They provide APIs, abstractions, and runtime environments rather than complete packaged workflows. Apache Spark and Hadoop are examples: they handle distributed data processing but require additional setup, integration, and orchestration to become production-ready.

Software platforms often embed or extend frameworks. For example, a data engineering tool may use Spark under the hood for transformations but expose higher-level controls for scheduling, error handling, and monitoring. Choosing between the two depends on whether an organization prioritizes flexibility and customization (frameworks) or operational simplicity and enterprise support (software).

Core Functions of Data Engineering Software

Data Ingestion and Integration

Data ingestion is the process of collecting and importing data from various sources, such as databases, APIs, files, and streaming services, into a centralized system. Data engineering software must handle a wide range of data formats and protocols to support structured, semi-structured, and unstructured data. Integration capabilities are essential to connect disparate systems, enabling flow between different platforms, cloud environments, and data warehouses.

Effective data integration ensures that data from multiple sources is made available in a unified format. This usually involves processes like cleaning, deduplication, and schema mapping. Without ingestion and integration, organizations risk inconsistencies and fragmented views of their data, which can hinder analysis and disrupt operations.

Transformation and Enrichment

Once data is ingested, it often requires transformation to fit analytical or business requirements. This can involve formatting, normalizing, aggregating, or joining datasets from different sources. Data engineering software provides transformation features through code, visual interfaces, or built-in functions, allowing organizations to customize the flow and structure of their data pipelines as needed.

Enrichment processes add context or value to raw data by merging external information, performing lookups, or deriving new attributes. This step is vital for delivering high-quality, ready-to-use datasets, improving the accuracy of analytics and downstream applications. Transformation and enrichment functions enable consistent, clean, and insightful data delivery.

Storage and Retrieval

Data engineering software manages persistent storage solutions that support efficient data retrieval and durability. These systems can range from cloud object storage and distributed file systems to NoSQL and relational databases. Choosing the right storage depends on access patterns, scalability needs, and the requirements of downstream consumers.

Efficient storage strategies ensure data is available, resilient, and structured for easy retrieval. The software supports indexing, partitioning, and caching mechanisms that optimize query performance and minimize latency. Storage and fast retrieval are critical for meeting service-level agreements and supporting diverse analytics workloads.

Orchestration and Workflow Management

Orchestration refers to the automated coordination of tasks and dependencies within data pipelines. Data engineering software provides workflow management tools that define task sequences, schedule executions, and monitor progress. These tools handle error recovery, retries, and dependency resolution, ensuring pipeline reliability without constant manual intervention.

Workflow management solutions offer visual interfaces or configuration-driven designs for building, configuring, and maintaining complex pipelines. They help teams track lineage, understand process bottlenecks, and debug failures efficiently.

Monitoring and Governance

Monitoring capabilities in data engineering software provide real-time visibility into pipeline health, performance metrics, failure alerts, and resource utilization. This enables teams to identify issues proactively and ensure data flows operate within expected parameters. Granular logging and alerting are crucial for minimizing downtime and meeting compliance requirements.

Data governance features establish clear ownership, access controls, lineage tracking, and policy enforcement across datasets. These capabilities help organizations maintain data integrity, privacy, and auditability. Governance and monitoring reduce risks, support regulatory compliance, and foster trust in the organization’s data assets.

Notable Data Engineering Software

Data Orchestration

1. Dagster

.webp)

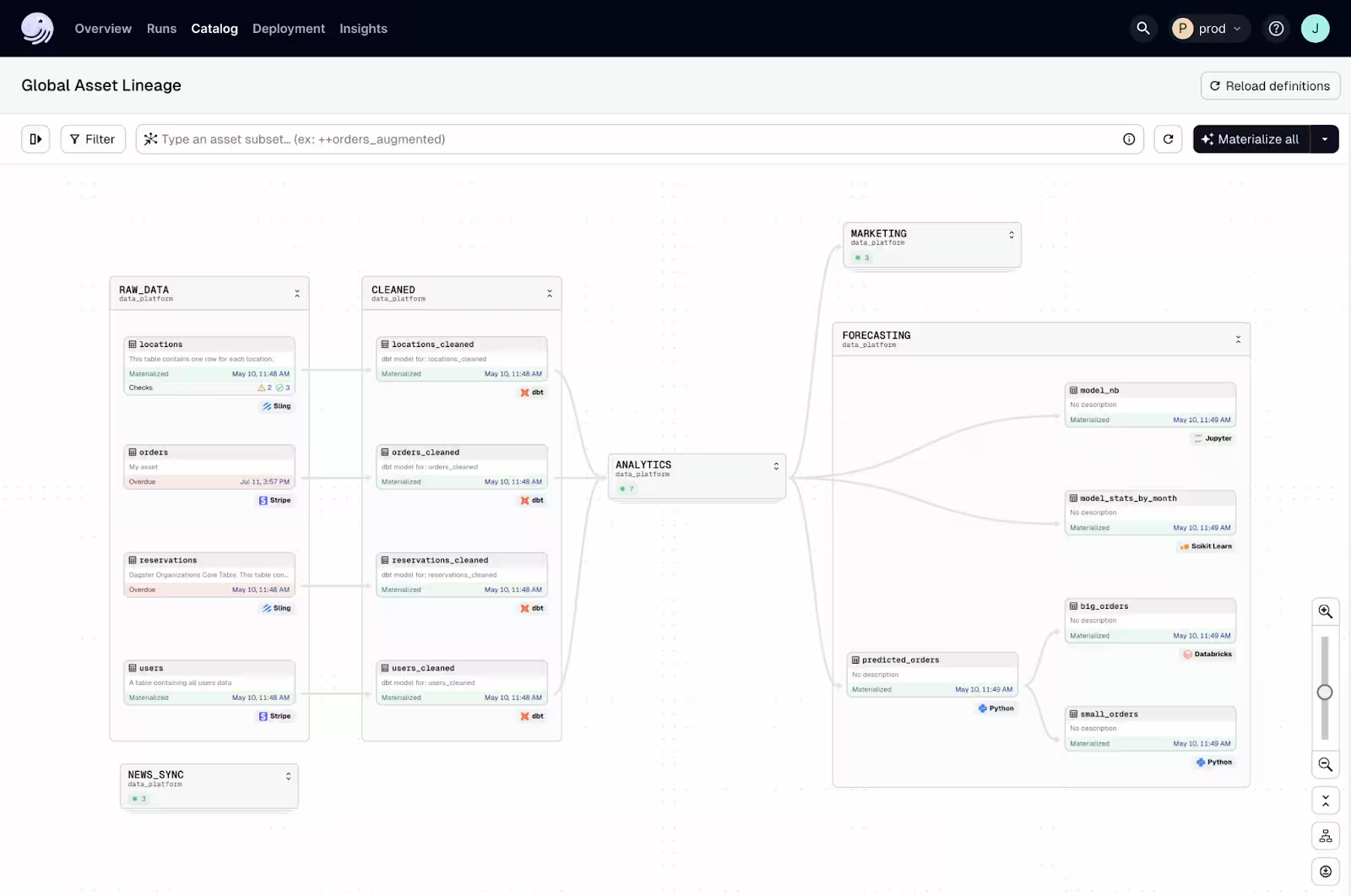

Dagster is a modern data orchestration platform designed for building, testing, and operating reliable data pipelines. It takes a software engineering–first approach to data engineering, emphasizing declarative pipeline definitions, strong typing, observability, and asset-centric modeling. Dagster helps teams manage complex workflows across batch, streaming, analytics, and machine learning use cases while maintaining visibility into data quality and lineage.

Unlike traditional task-based orchestrators, Dagster centers pipelines around data assets, the tables, files, models, and datasets that teams ultimately care about, rather than just task execution order. This approach improves maintainability, testing, and cross-team collaboration.

Key features include:

- Asset-centric orchestration: Pipelines are defined as software-defined assets with explicit dependencies, enabling clear lineage and impact analysis.

- Declarative data modeling: Assets, schedules, and resources are defined in Python with strong typing and structured configuration.

- Built-in observability: The Dagster UI provides visibility into runs, logs, asset health, and automatically captured lineage.

- Data quality checks: Native asset checks and freshness policies help ensure reliable and trustworthy datasets.

Dagster+ and Compass: Dagster is available as open source and as Dagster+, a managed cloud offering with governance, alerting, and hybrid deployment support. Dagster Compass provides centralized asset discovery, ownership tracking, and health visibility across teams.

2. Prefect

.webp)

Prefect is a workflow orchestration tool to simplify and scale data pipeline management with a focus on Python-native development. Unlike traditional orchestrators, Prefect emphasizes developer ergonomics and observability by allowing users to define workflows as code without the overhead of rigid DAG structures.

Key features include:

- Python-first workflow definition: Pipelines are written in pure Python using decorators and flow functions, offering full flexibility and reusability without needing YAML or separate configuration files.

- DAG-free execution model: Prefect uses a task dependency graph, but allows dynamic branching, loops, and conditions that aren’t easily expressed in static DAGs.

- Observability and logging: Offers centralized logging, metrics, and real-time visibility into task states through both Prefect Cloud and open-source UI.

- Fault tolerance and retries: Supports automatic retries, caching, and state management, making workflows more resilient to failure.

- Flexible deployment options: Can run on local machines, in Kubernetes, on cloud VMs, or as a managed service via Prefect Cloud, depending on infrastructure needs.

3. Apache Airflow

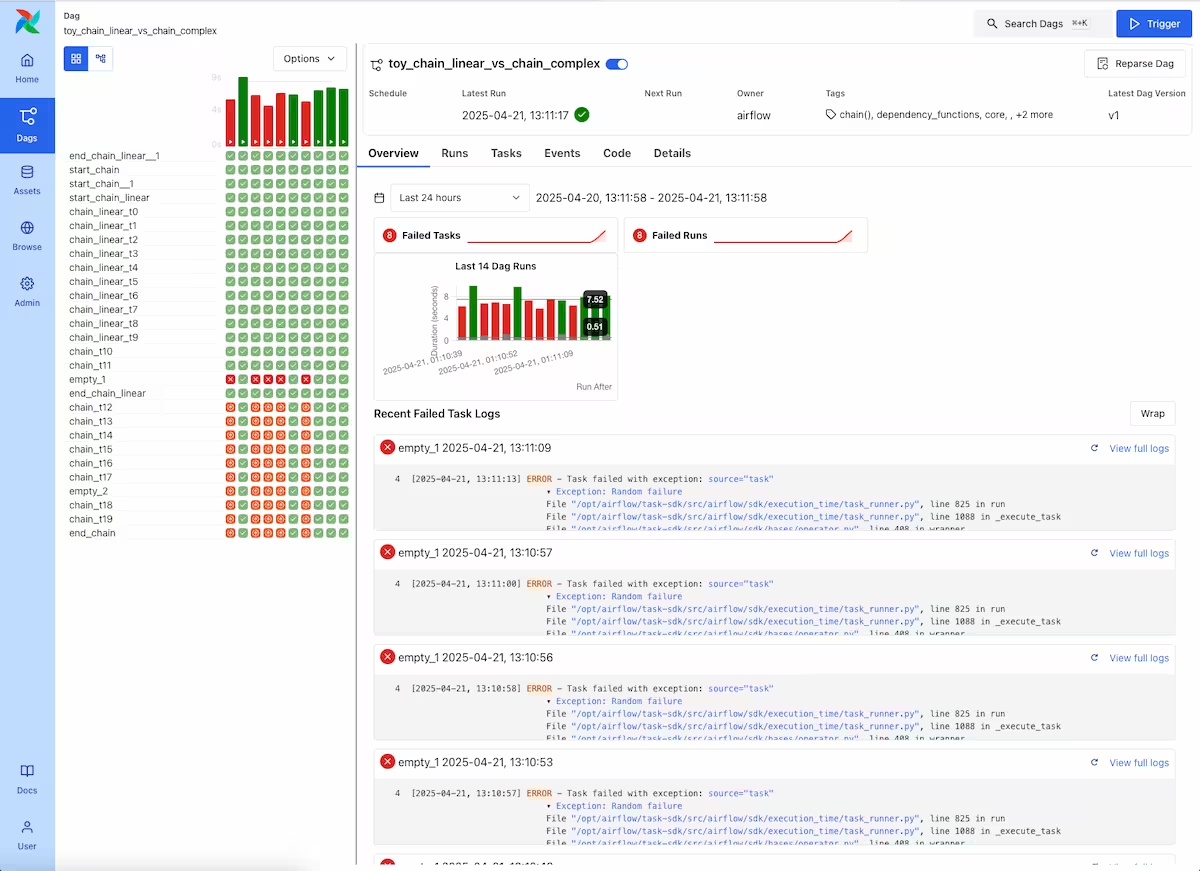

Apache Airflow is an open-source workflow orchestration tool to help engineers programmatically author, schedule, and monitor data pipelines. Built in Python, it allows developers to define workflows as code using directed acyclic graphs (DAGs). Each DAG represents a pipeline where tasks are nodes and dependencies are edges.

Key features include:

- Python-based configuration: Workflows are defined using Python scripts, enabling dynamic pipeline creation and reuse of existing libraries and logic.

- DAG-centric design: Uses DAGs to represent workflows, allowing precise control over task execution order and dependency resolution.

- Flexible scheduling: Supports both time-based scheduling (e.g. hourly, daily) and event-driven triggers (e.g. file arrival).

- Visual monitoring interface: Offers a web UI to track task execution, view logs, and monitor pipeline health.

Pluggable architecture: Integrates with custom operators, sensors, and hooks for working with external systems and services.

Related content: Read our guide to data orchestration

Big Data Processing

4. BigQuery

.webp)

BigQuery is a serverless data warehouse and analytics platform designed to support large-scale processing, machine learning, and real-time analytics within a unified environment. It enables SQL-based querying, integrates with open formats and frameworks like Spark and Apache Iceberg, and includes built-in support for AI and data governance.

Key features:

- AI-integrated SQL workflows: Run ML models, generate embeddings, and perform tasks like sentiment analysis using native SQL commands, without moving data.

- Serverless Spark support: Execute Spark jobs alongside SQL in a unified runtime, simplifying hybrid processing across batch and stream workloads.

- Built-in governance: Features like metadata harvesting, lineage tracking, and semantic search are integrated through Dataplex Universal Catalog.

- Streaming and batch ingestion: Supports data ingestion via batch loads, Pub/Sub streams, and change data capture with Datastream.

Scalable and decoupled architecture: Separates storage from compute, allowing cost-efficient, petabyte-scale analysis with auto-scaling and cross-region replication.

5. Apache Spark

Apache Spark is a distributed processing engine designed for large-scale data analytics, supporting batch, streaming, SQL, and machine learning workloads in a unified architecture. It works across clusters or single-node setups and is accessible via languages like Python, SQL, Scala, Java, and R.

Key features:

- Unified batch and streaming processing: Processes real-time and historical data using the same engine and APIs.

- High-performance SQL: Executes ANSI-compliant SQL queries with distributed processing for ad hoc and dashboard-style analytics.

- Scalable machine learning: Trains models on small datasets and scales seamlessly to cluster-based processing for larger workloads.

- Adaptive query execution: Dynamically adjusts execution plans for optimal performance at runtime, including join strategies and reducer settings.

Multi-language support: Offers native APIs in multiple languages, enabling flexible development and integration with various ecosystems.

6. Apache Flink

.webp)

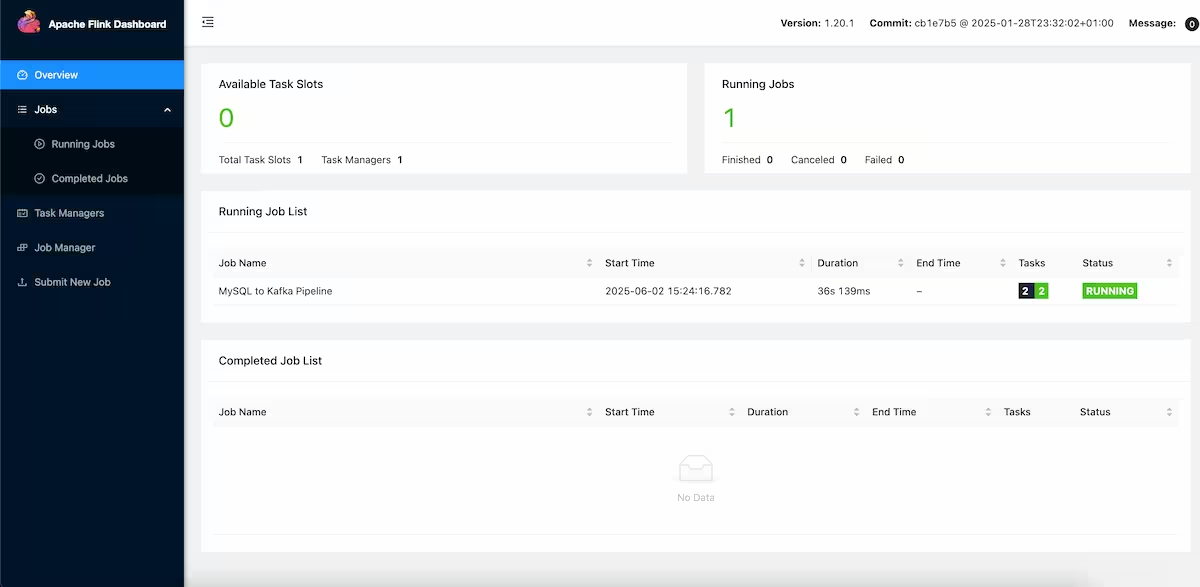

Apache Flink is a framework for building stateful stream and batch data processing applications. It supports low-latency, high-throughput operations and provides consistency guarantees, making it suitable for real-time analytics, ETL, and event-driven systems.

Key features:

- Stateful stream processing: Handles unbounded data streams with exactly-once consistency and event-time semantics.

- Layered APIs: Offers multiple abstraction levels including SQL, DataStream API, and ProcessFunctions for fine-grained time and state control.

- Scalable architecture: Supports large application state and scaling through features like incremental checkpoints and savepoints.

- Operational flexibility: Deploys on various cluster environments with high-availability setups and flexible configuration.

In-memory performance: Designed for low-latency and high-throughput workloads, using in-memory computing for faster execution.

Data Streaming

7. Apache Kafka

Apache Kafka is a distributed event streaming platform designed for building high-throughput, scalable, and fault-tolerant data pipelines. It supports mission-critical applications by ingesting, storing, and processing massive volumes of event data across clusters. Kafka includes native stream processing capabilities and integrates with data systems through Kafka Connect.

Key features:

- High throughput and low latency: Delivers millions of messages per second with latencies as low as 2ms across large clusters.

- Elastic scalability: Supports scaling to thousands of brokers and petabytes of data, with dynamic expansion and contraction of resources.

- Durable, fault-tolerant storage: Ensures persistent storage of event streams using a distributed, replicated log.

- Built-in stream processing: Enables operations like filtering, joins, and aggregations with event-time semantics and exactly-once guarantees.

- Extensive integrations: Connects to a wide ecosystem of sources and sinks via Kafka Connect, including databases, cloud storage, and search engines.

8. Apache Pulsar

Apache Pulsar is a distributed messaging and streaming platform built for cloud-native environments. It offers high-performance event streaming with multi-tenancy, geo-replication, and fine-grained resource isolation. Pulsar separates compute from storage, enabling flexible scaling and topic management across large deployments.

Key features:

- Low-latency messaging: Supports both message queues and stream processing with sub-10ms latency across hundreds of nodes.

- Geo-replication and failover: Replicates data across regions and supports automatic client failover to ensure availability.

- Multi-tenant architecture: Provides isolation and access control per tenant, allowing shared clusters across organizations.

- Dynamic scalability: Automatically balances load and redistributes hot topics without data reshuffling.

- Serverless processing: Supports native Pulsar Functions for lightweight message handling in Java, Go, or Python.

9. Amazon Kinesis

Amazon Kinesis is a fully managed streaming data service that enables real-time data ingestion, processing, and analysis at scale. It offers modular components for different workloads, including Kinesis Data Streams for ingesting high-volume data, Kinesis Data Firehose for delivery to destinations, and Managed Flink for real-time analytics.

Key features:

- Multiple data stream types: Includes services for video, data streams, and serverless delivery pipelines to data lakes and analytics platforms.

- Real-time processing: Processes and analyzes incoming data immediately without waiting for full batch completion.

- Scalable and serverless: Handles high-throughput workloads with no server provisioning, automatically scaling to match data volume.

- AWS ecosystem integration: Seamlessly connects with Amazon S3, Redshift, Lambda, and other AWS services for storage, analytics, and processing.

Flink-based analytics: Offers a managed Apache Flink service for building custom stream processing applications with low-latency results.

Data Transformation

10. dbt

.webp)

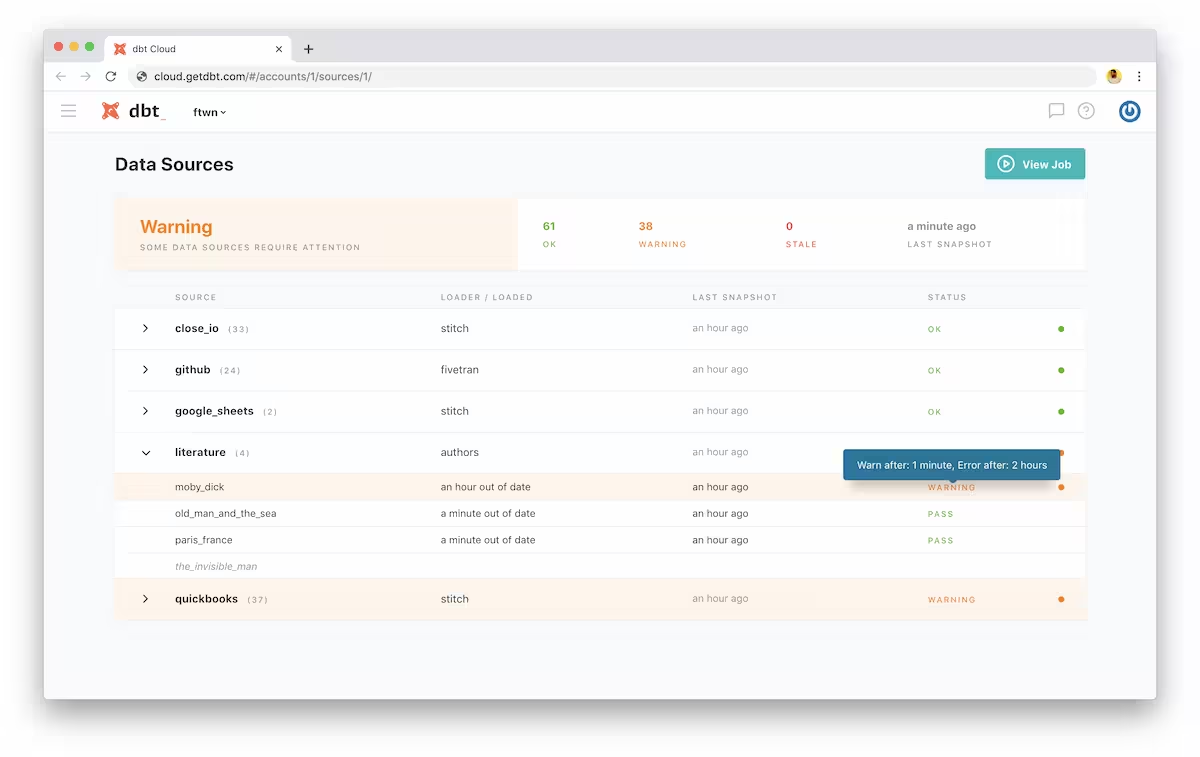

dbt is a data transformation platform that enables teams to turn raw data into reliable, analytics-ready datasets using just SQL. It brings software engineering practices like version control, CI/CD, and testing into analytics workflows. dbt acts as the control plane for structured data, centralizing business logic, automating workflows.

Key features include:

- SQL-based transformation: Allow analysts to build and maintain data models directly in SQL, simplifying the development process while maintaining full control over transformation logic.

- Built-in orchestration: Automate and schedule the execution of data pipelines, enabling smooth deployment cycles and reducing operational overhead.

- Data observability: Proactively monitor pipeline health with built-in testing, alerts, and metadata signals to catch issues before they impact downstream consumers.

- Semantic layer: Define and manage business metrics, making them accessible to different BI tools and large language models (LLMs) with consistent logic and definitions.

Lineage and cataloging: Visualize data lineage and explore metadata across the warehouse, improving context, documentation, and debugging workflows.

11. Matillion

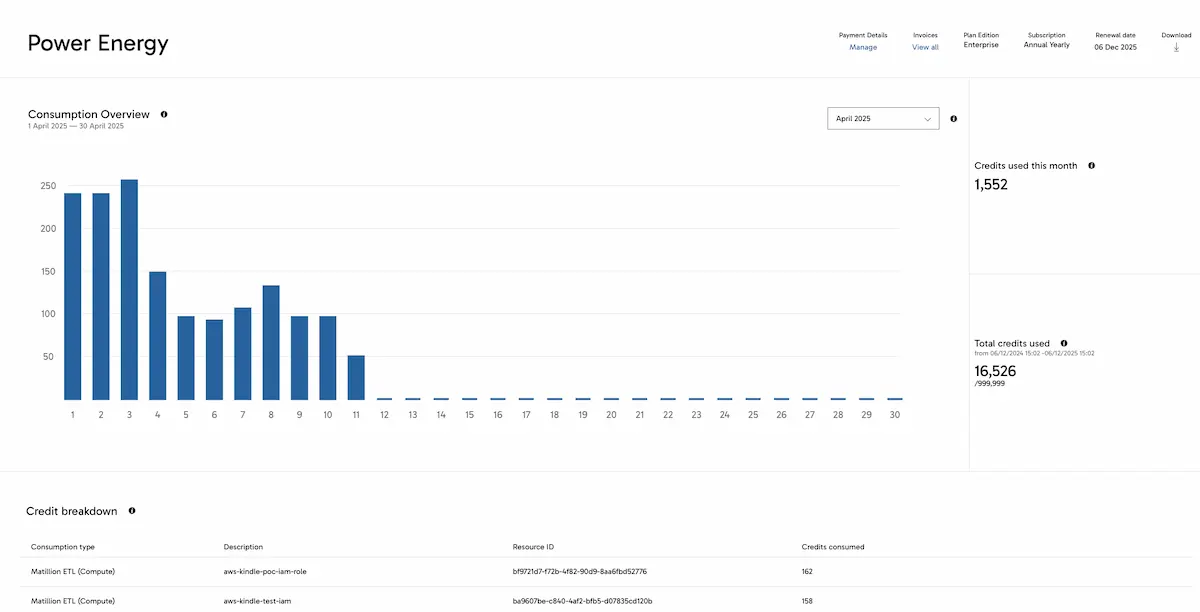

Matillion is a cloud-native data transformation platform that helps teams build and manage pipelines with both code-optional and no-code workflows. It supports a range of data sources and cloud destinations, and offers tools to automate transformation, manage pipeline execution, and integrate AI capabilities.

Key features:

- Code-optional transformations: Supports both no-code and high-code options for building ETL workflows, making it accessible to different skill levels.

- Pipeline automation: Automates pipeline execution, error handling, and task scheduling to reduce manual overhead and speed up delivery.

- Wide data connectivity: Provides pre-built connectors for systems like Salesforce, SAP, PostgreSQL, Amazon S3, and custom sources.

- Centralized management: Offers a single platform for visibility into all pipelines, along with monitoring and task automation.

Security and compliance: Built with enterprise-grade security to meet compliance needs across cloud environments.

12. Talend

Talend, now part of Qlik, offers a unified platform for data integration, transformation, and governance. It supports multi-modal data processing and flexible deployment across cloud, on-premises, or hybrid environments. Talend is intended to deliver trusted, analytics-ready data pipelines through visual tools, code-based options, and AI augmentation.

Key features:

- Multi-modal integration: Handles ETL, ELT, batch, real-time, and API-based data flows within a single platform.

- Flexible transformation tooling: Offers visual low-code interfaces and full-code support, enabling a wide range of user roles to transform data.

- Built-in data quality and governance: Includes features like lineage tracking, reusable data products, and policy enforcement for trust and transparency.

- AI-augmented pipelines: Uses AI to assist in building, optimizing, and maintaining pipelines that support analytics and machine learning workloads.

Hybrid deployment support: Runs in cloud, on-premises, or hybrid environments, providing flexibility for compliance and infrastructure constraints.

.avif)

Related content: Read our guide to data engineering tools

Considerations for Choosing Data Engineering Software

Selecting the right data engineering software depends on a variety of organizational, technical, and operational factors. The following considerations can help teams evaluate tools that best fit their data architecture, use cases, and team capabilities:

- Pipeline complexity and flexibility: Choose tools that can handle the scale and complexity of workflows. If the pipelines involve branching logic, variable dependencies, or frequent changes, prioritize solutions with dynamic DAG support, parameterization, and modular configurations.

- Integration ecosystem: Ensure compatibility with existing systems: databases, cloud platforms, APIs, and third-party services. Strong integration support reduces implementation overhead and ensures data movement across tools.

- Latency and throughput requirements: Some use cases demand low-latency or near-real-time processing (e.g. stream analytics), while others are batch-oriented. Match the software to the performance characteristics of data workloads.

- Observability and debugging tools: Effective monitoring, alerting, and logging are essential for maintaining pipeline reliability. Choose tools with built-in support for visualizing data lineage, tracking task execution, and capturing failures.

- Operational scalability: Assess how well the software scales with growing data volumes and concurrent workloads. Look for features like horizontal scaling, distributed execution, and efficient resource management.

- Team skill set and usability: Consider who will be building and maintaining pipelines. Some tools are code-heavy and suitable for engineers, while others offer low-code or visual interfaces suited for broader data teams.

- Governance and security: Support for role-based access control, encryption, auditing, and data lineage is crucial, especially in regulated industries. Ensure the tool aligns with the organization’s security and compliance policies.

- Cost and licensing model: Evaluate the total cost of ownership, including licensing, cloud infrastructure, support, and maintenance. Open-source tools may reduce software costs but can increase the need for internal support.

- Community and ecosystem support: Tools with strong community backing, comprehensive documentation, and active development tend to evolve faster and provide better support through forums, plugins, and integrations.

Conclusion

Data engineering software provides the building blocks to move, transform, and govern data reliably at scale. The right stack standardizes pipeline development, enforces quality controls, and automates operations, so teams can deliver trusted datasets to analytics and AI with predictable cost and performance. Choosing tools that match workload patterns, skills, and governance needs leads to simpler architectures and faster time to value.

.jpg)

.png)

.jpg)

.png)